😸 The feud running the AI industry


Welcome, humans.

We told you last week that OpenAI killed Sora. Turns out, every video a user generated drew from a finite pool of compute that OpenAI desperately needed elsewhere. The app peaked at a million users, collapsed to under 500,000, and was burning $1 million a day on the way down. Meanwhile, Anthropic was quietly winning over the engineers and enterprises that actually drive revenue. Altman made the call.

Picture this. You generate an AI video. It's not quite right, so you generate another. And another. Each attempt feels free, but somewhere in a data center, a GPU is sweating. Compute is the new crude oil. And OpenAI just decided where to drill.

Here’s what happened in AI today:

😺 The WSJ revealed the shouting match that created Anthropic and why the feud is still going.

📰 A grandmother spent 5 months in jail after AI facial recognition wrongly ID'd her.

🍪 Bluesky launched an AI app that lets you build your own social feed with plain language.

🍪 AI is quietly hollowing jobs out task by task.

… and a whole lot more that you can read about here.

P.S:

Want to reach 675,000 AI-hungry readers?

Click here to advertise with us.

😺 The Altman-Amodei Feud Explained

You probably know that Anthropic was founded by people who left OpenAI. What you probably don't know is why they left, or how personal it got on the way out. It was a falling-out that turned into an ideological split, one that's now shaping trillion-dollar decisions about how AI gets built, who controls it, and what it's allowed to do.

A new WSJ investigation by reporter Keach Hagey, based on interviews with current and former employees at both companies, gives the most complete account yet of how it started. Here's the arc.

It started as a disagreement about principles…

Dario Amodei joined OpenAI in 2016, eventually rising to VP of Research and becoming a key architect of GPT-2 and GPT-3. But behind the scenes, he and Sam Altman were increasingly at odds over two core questions: how fast should you move, and who should be in charge?

The cracks widened fast:

The Russia/China proposal.

Co-founder Greg Brockman floated a fundraising plan that included selling AGI access to rival nations. Dario reportedly considered it borderline treasonous and nearly quit on the spot.

The broken promise.

Altman assured Dario that Brockman and Ilya Sutskever (OpenAI's chief scientist at the time) wouldn't have authority over him. Dario later discovered a quiet handshake deal giving both the power to fire him.

The confrontation.

In 2020, Altman called Dario and his sister Daniela into a conference room and accused them of organizing negative board feedback against him. It ended in a shouting match.

Dario's conditions to stay were simple. Report directly to the board, and never work with Brockman again. Both were rejected. He left in 2021, taking Daniela and a dozen other OpenAI employees with him to found Anthropic.

At its core, the split came down to this. Dario believed you could scale AI fast AND build it safely. Altman believed speed and market dominance came first. They never resolved it. They just built two separate companies around it.

And if you thought that settled things, think again. The WSJ report ends there, but the feud absolutely did not.

The same disagreements about control, safety, and power now show up in every major public decision both companies make. Just in February:

The Super Bowl shots.

Anthropic ran a four-ad campaign with one message front and center:

"Ads are coming to AI. But not to Claude."

A direct dig at OpenAI's plan to run ads in ChatGPT. Altman publicly called the ads "clearly dishonest." Marketing professor Scott Galloway's verdict was blunt. Market leaders don't acknowledge the competition. Altman blinked.

The India photo op.

At an AI summit, India's Prime Minister Modi tried to manufacture a unity moment with rival CEOs, hands raised together. Google's Sundar Pichai and Meta's AI chief joined in. Altman and Amodei raised separate fists and didn't make eye contact. The internet had a field day. Altman later said he was "just confused." Sure.

The Pentagon standoff.

When the Defense Department came calling, the two companies went in opposite directions, revealing exactly where their priorities lie.

Anthropic refused to sign without hard safeguards against autonomous weapons and mass domestic surveillance. OpenAI signed hours later. Defense Secretary Hegseth responded by threatening to designate Anthropic a national supply chain risk, which would effectively bar it from working with any government contractor.

Privately, the rhetoric is even harsher. Per the WSJ, Dario has reportedly compared the Altman-Musk lawsuit to "Hitler vs. Stalin" internally, called OpenAI co-founder Brockman's $25M donation to a pro-Trump super PAC "evil," and likened OpenAI to a tobacco company.

Why this matters:

Two companies valued north of $300B are making decisions on ads, weapons contracts, and who gets access to the most powerful AI in the world. Those decisions trace back to a shouting match in 2020. And the gap keeps widening. This week, leaked Anthropic documents revealed a new model called Claude Mythos that's described as "dramatically" ahead of anything else on the market. It's also described as "very expensive to serve." Meanwhile, Claude users on $100/month plans are already hitting rate limits within an hour. The more powerful the model, the harder it is to keep it accessible. That's exactly what Dario said he was leaving OpenAI to fix.

Our take:

Dario built Anthropic around the idea that safety and scale aren't opposites. But right now, his most powerful model is too expensive to serve, his paying users are hitting walls, and his company just got threatened by the Pentagon for standing on principle. OpenAI, meanwhile, signed the defense deal, is running ads, and is winning the distribution war. The irony is hard to miss. The "responsible" path is turning out to be the harder business. Whether that changes, or whether Anthropic's bet eventually pays off, is probably the most important question in AI right now.

FROM OUR PARTNERS

The most critical move of 2026? Operationalizing your company’s shared knowledge

Most teams have the knowledge. They just can’t use it.

Atlassian Rovo connects your company's docs, projects, code, decisions, and people into a single, permissions-aware layer you can tap into instantly. And because Rovo lives where your teams already work, it doesn't just help you find answers. It helps you do the work:

Ask Rovo to turn meeting notes into a project plan in Confluence and trackable action items in Jira

Ask Rovo to pull the right docs, decisions, and data to ramp onto a new project fast

Ask Rovo to summarize project updates across all your tools and send the summary to your team so everyone stays in sync without another status meeting

See how customers like Domino's and FanDuel are becoming AI-native teams with Rovo.

With Rovo, anyone can go from AI novice to AI native. Just ask.

👉 See how it works

🎓 AI Skill of the Day: The 5-Step Framework for Building Reliable AI Agents

Most people build AI agents by telling them what to do.

Yuri Kramarz, Principal Engineer at Cisco Talos, says that's exactly why most agents fail.

His fix is deceptively simple. Give your agent an identity, define its boundaries, and force it through a structured thinking loop before it outputs anything.

Here's the full framework:

1. Give it an identity.

Not philosophical. Practical. "I analyze customer feedback to surface product improvement opportunities" will outperform "I help with feedback" every single time. One sentence of purpose changes everything.

2. Define what it doesn't do.

This is where most people fail. Write down what your agent does, then write down what it won't do. "I summarize documents. I will not make recommendations." That one line kills half the hallucinations people complain about.

3. Force it through Observe, Reflect, Act.

Observe: What are the facts in front of you?

Reflect: What do they mean together? What's missing?

Act: Based on that synthesis, what's the right output?

4. Build in a validation checkpoint.

Before delivering any output, the agent should ask itself: Am I sure? What would make this wrong? Is this complete and accurate? The agents that perform best in production aren't the cleverest. They're the ones that double-check their work.

5. Be honest about limitations.

Build it in explicitly. "I cannot analyze images." "I may miss context from conversations I haven't seen." "Complex legal questions require additional review." This isn't weakness. It's reliability.
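Here's one way to turn the five steps into something you can reuse: a small prompt builder. Treat it as a minimal sketch, not Kramarz's own implementation. The `AgentSpec` class and `build_system_prompt` function are hypothetical names. The code only assembles plain-text instructions and doesn't call any particular model API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical container for the framework's elements (names are illustrative)."""
    identity: str        # Step 1: one sentence of practical purpose
    does: list[str]      # Step 2: what the agent does...
    does_not: list[str]  # ...and what it explicitly won't do
    limitations: list[str] = field(default_factory=list)  # Step 5: stated limits


# Step 3: the Observe, Reflect, Act loop, phrased as instructions to the model.
ORA_STEPS = [
    "Observe: What are the facts in front of you?",
    "Reflect: What do they mean together? What's missing?",
    "Act: Based on that synthesis, what's the right output?",
]

# Step 4: validation checkpoint questions to run before delivering output.
VALIDATION_QUESTIONS = [
    "Am I sure?",
    "What would make this wrong?",
    "Is this complete and accurate?",
]


def build_system_prompt(spec: AgentSpec) -> str:
    """Assemble a plain-text system prompt covering all five framework steps."""
    lines = [f"Identity: {spec.identity}", "", "I do:"]
    lines += [f"- {item}" for item in spec.does]
    lines += ["", "I will not:"]
    lines += [f"- {item}" for item in spec.does_not]
    lines += ["", "Before answering, work through these steps in order:"]
    lines += [f"{i}. {step}" for i, step in enumerate(ORA_STEPS, start=1)]
    lines += ["", "Before delivering output, check:"]
    lines += [f"- {q}" for q in VALIDATION_QUESTIONS]
    # Only add the limitations section when some limits have been declared.
    if spec.limitations:
        lines += ["", "Known limitations (state them when relevant):"]
        lines += [f"- {lim}" for lim in spec.limitations]
    return "\n".join(lines)


if __name__ == "__main__":
    spec = AgentSpec(
        identity="I analyze customer feedback to surface product improvement opportunities.",
        does=["Summarize customer feedback documents"],
        does_not=["Make recommendations"],
        limitations=[
            "I cannot analyze images.",
            "I may miss context from conversations I haven't seen.",
        ],
    )
    print(build_system_prompt(spec))
```

The "won't do" list and the limitations are kept as explicit data rather than buried in free text. That makes them easy to review and tighten, which is the point of steps 2 and 5.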

Our favorite insight:

Clarity beats clever. Every time. For agents and humans alike.

Want more tips like this? Check out our AI Skill of the Day Digest for this month.

Have a specific skill you want to learn?

Request it here.

Trending: Three popular Neuron podcast eps…

New episodes air every week on: Spotify | Apple Podcasts | YouTube

🍪 Treats to Try

*Asterisk = from our partners (only the first one!).

Advertise to 675K+ readers here!

*Atlas Learning Hub offers videos, courses, and documentation to level up your MongoDB skills, from schema design to search and AI.

Podcast Transcript lets you search Spotify or Apple Podcasts, pick any episode, and get a full AI-generated transcript and summary in minutes, no uploading required.

Ordo saves any link, video, or article you share to it in one tap, then automatically organizes everything into folders and tags so you can actually find it later.

Grok Imagine V2 by xAI turns text prompts into 4K videos up to 30 seconds long or photorealistic images, with synchronized audio, sound effects, and the ability to animate any photo you upload.

Pensieve connects to all your company's existing tools (docs, messages, calls, code) and builds a living knowledge graph of your entire business, so AI agents can operate with full context instead of isolated fragments.

📰 Around the Horn

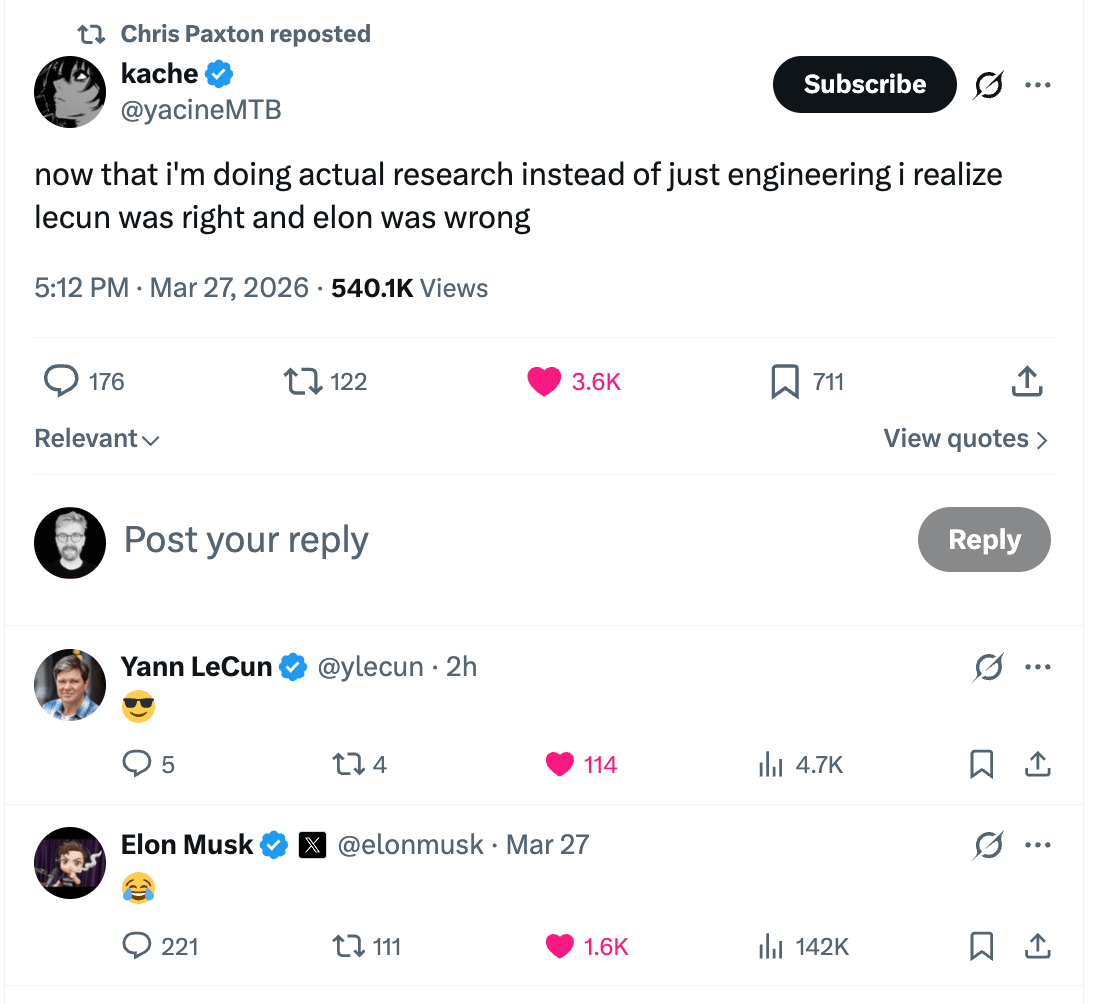

The researchers have spoken. LeCun: 1, Musk: 0.

A new research paper argued AI isn't killing jobs outright but "unbundling" them into narrower, lower-paid tasks, with workers in weak-bundle roles like coding support or ticket handling most at risk of having their jobs quietly hollowed out.

A Tennessee grandmother spent five months in jail after police used Clearview AI's facial recognition to wrongly link her to bank fraud committed in North Dakota, a state she says she'd never visited.

Bluesky launched Attie, a standalone AI app powered by Claude that lets anyone build custom social feeds using plain-language commands, with plans to eventually let users vibe-code their own apps on the AT Protocol.

A developer published a detailed breakdown of how ChatGPT's bot detection works. Every message triggers a hidden Cloudflare program that checks 55 properties across your browser, Cloudflare's network, and ChatGPT's React app state, meaning bots that spoof browsers but don't fully render ChatGPT will fail.

A developer built a fully functional browser-based digital audio workstation using an open-source multi-agent coding harness that ran unattended for 20 hours. They then compared it to Anthropic's own internal version, which cost $124 in API credits and took under 4 hours but produced a less complete result.

Want absolutely EVERYTHING that happened in AI this week? Click here!

FROM OUR PARTNERS

How to Get Started with Agentforce in Slack

Bring AI agents right into the flow of work — no new logins, no context switching, just smarter collaboration where it already happens. Our new guide walks you through everything from first setup to advanced custom builds.

Get the guide

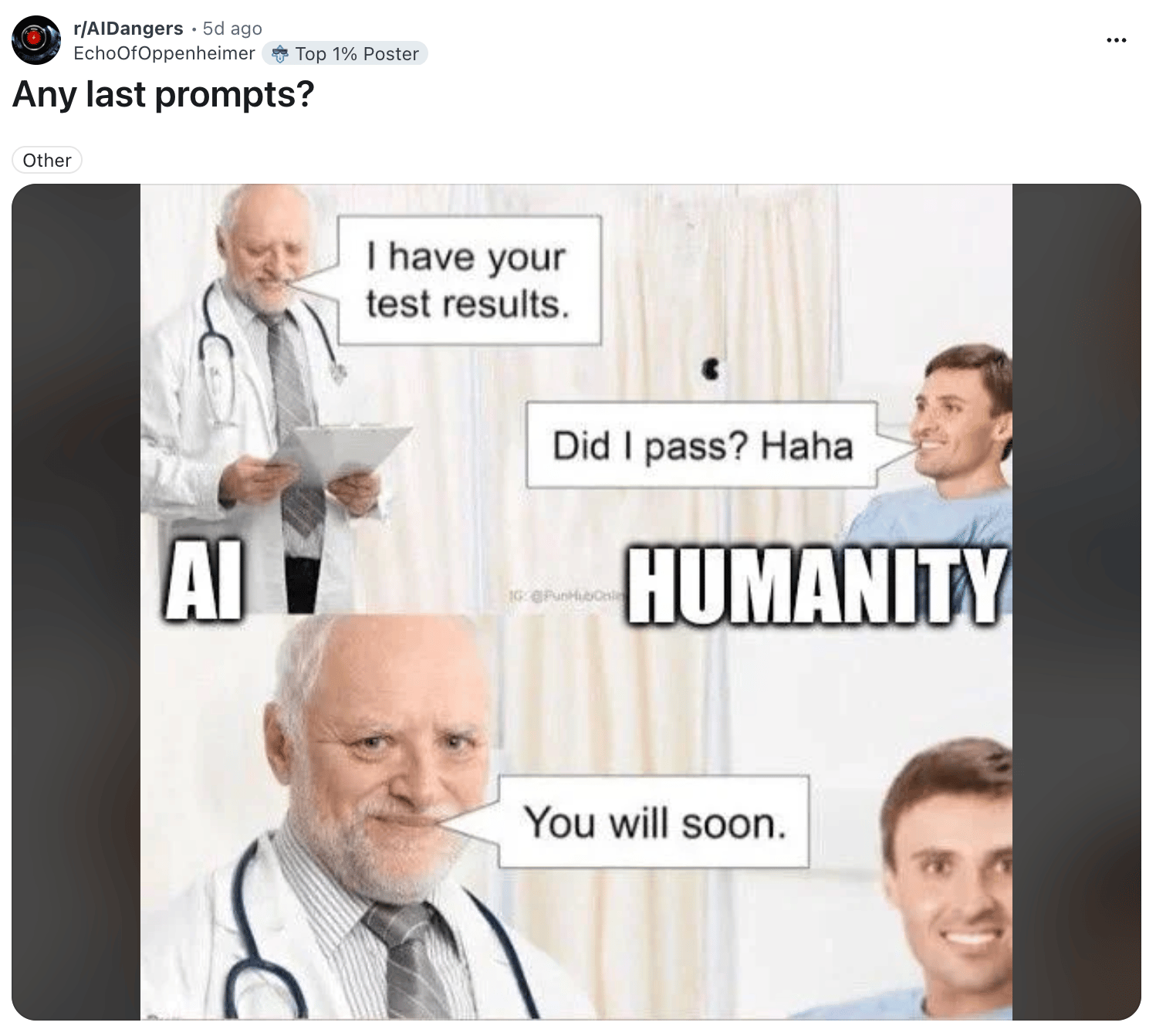

😸 Monday Meme

The Turing test was supposed to go the other way, buddy.

A Cat’s Commentary


P.S:

Before you go… have you subscribed to our YouTube Channel? If not, can you?

Click the image to subscribe!

P.P.S:

Love the newsletter, but only want to get it once per week? Don't unsubscribe. Update your preferences here.