😸 AI found bugs humans missed for 27 years

Welcome, humans

Prego (yes, the pasta sauce people) just

released a hockey puck-shaped microphone

designed to record your family's dinner table conversations and preserve them forever.

The device has no wifi, no Bluetooth, no cloud, and no AI. The whole point is to get everyone off their phones and talking to each other. You record, plug into your computer with a USB-C cable, and can optionally donate the audio to the Library of Congress. Very normal stuff.

For $20, you get the recorder, a jar of Prego Traditional, and a card deck of conversation prompts. Which means Prego has somehow figured out how to sell you pasta sauce and a therapy exercise in the same bundle. Respect, honestly.

Here’s what happened in AI today:

Anthropic's Claude Mythos helped Firefox patch 271 security bugs in one release.

SpaceX struck a deal to potentially acquire AI coding tool Cursor for $60B.

Meta is tracking employee mouse movements and keystrokes to train its AI agents.

Sullivan & Cromwell apologized to a judge after AI hallucinated fake case citations.

Don’t forget:

Check out our podcast, The Neuron: AI Explained on

Spotify

,

Apple Podcasts

, and

YouTube

— new episodes air every week on Tuesdays after 2pm PST!

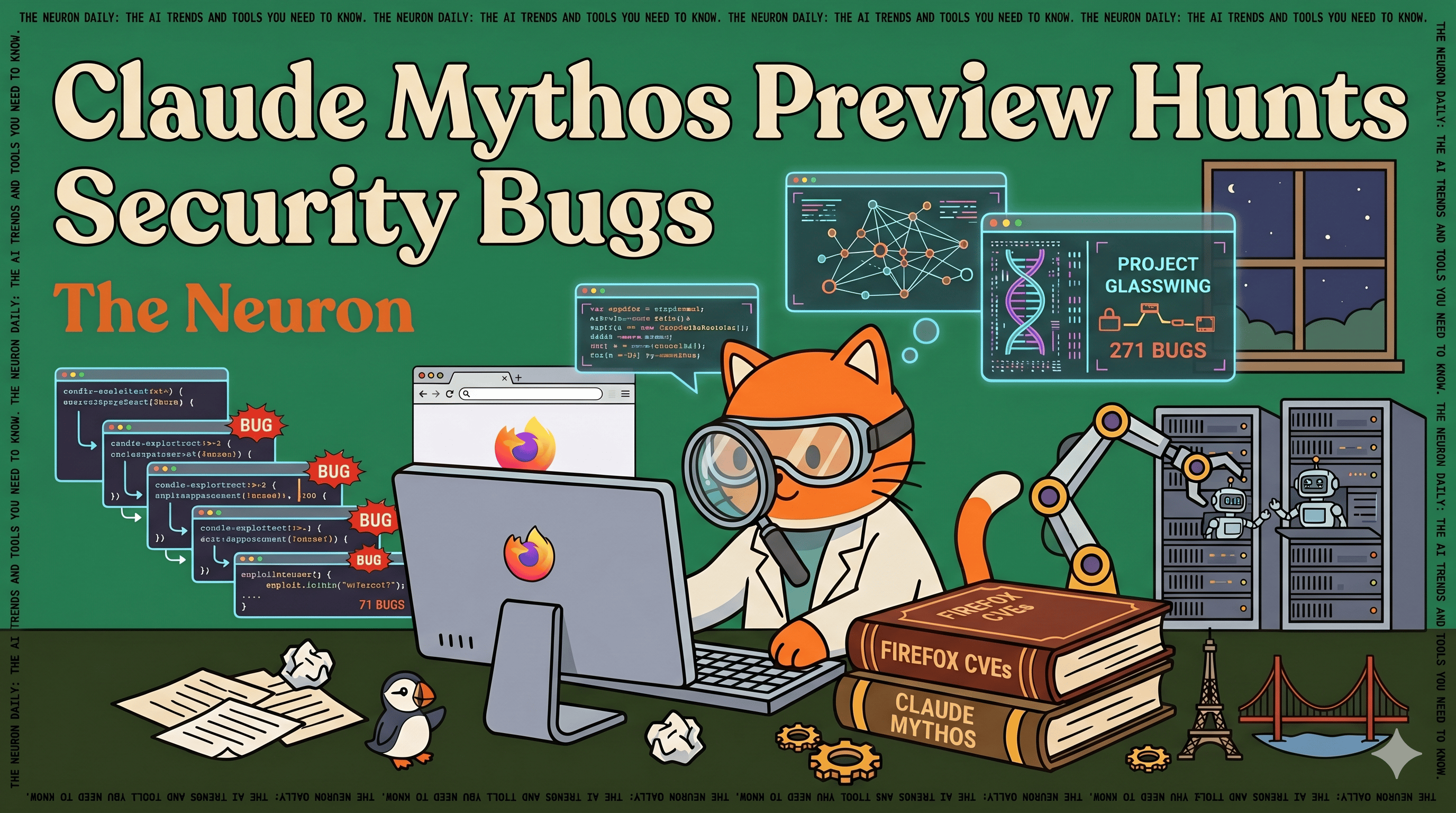

Firefox 150 Fixed 271 Security Bugs. Claude Mythos Found Them All.

The internet has a dirty secret: the software we all use every day is riddled with ancient bugs that nobody ever found. Not because nobody looked, but because finding them required a level of human expertise so rare and expensive that most attackers couldn't afford it either.

But that changed with Claud Mythos.

Firefox 150 released

this week patches for 271 vulnerabilities

found using Anthropic's Claude Mythos Preview, a powerful new AI model that Anthropic has quietly been giving to a small group of companies to hunt down security flaws before the bad guys do. The project is called

Project Glasswing

, and it's essentially a coordinated race to patch the internet before AI-powered hacking becomes cheap and accessible.

Here's the short version of what Mythos can do:

Found

thousands of zero-day vulnerabilities

(flaws unknown even to the software's own developers) across every major operating system and browser

Discovered a

27-year-old bug in OpenBSD

, a system specifically designed to be hard to hack

Found a

16-year-old flaw in FFmpeg

, a video tool that automated scanners had checked five million times without flagging anything

Developed a browser exploit that chained four vulnerabilities together to escape both the browser sandbox and the operating system itself

Scored 90x better than Anthropic's previous best model at writing working exploits for Firefox vulnerabilities

For context:

In 2025, finding just one bug like this would have been a red alert. Firefox just patched 271 of them in a single release.

Why this matters for you:

Mythos wasn't specifically trained to hack. These capabilities emerged as a side effect of making it better at coding. Anthropic's own team put it plainly that the same improvements that make the model better at fixing vulnerabilities also make it better at exploiting them. Which is exactly why

Anthropic is not releasing it to the public

.

Firefox's CTO said it plainly that every piece of software is going to have to go through this kind of security overhaul, because bugs that were previously too hard to find are now discoverable by anyone with access to a model like this.

The window to patch before attackers get similar tools is measured in months, not years. Project Glasswing is the industry's attempt to use that window wisely.

Prompt Tip of the Day

Developer Maxi Contieri shared a

coding workflow tip

that's flying under the radar: your pull request descriptions are AI training data if you bother to write them that way.

The problem: every time you close an AI coding session, the reasoning disappears. Two weeks later, nobody knows which prompt fixed the bug, what failed first, or why you chose that approach. Not your teammates, not a new agent, not you.

The fix is simple. Add an "AI Context" block to every non-trivial PR description:

Which tool and model you used (Claude Code, Cursor, etc.)

The key prompt that actually unlocked the solution

What the AI tried first that didn't work

Any manual corrections you made to the output

That last one is the most underrated. If you had to edit the AI's output, that's a signal worth capturing. It's feedback your team can turn into a permanent rule.

The bigger idea: think of a PR description as a commit message for your AI reasoning. A commit records what changed. An AI-trace PR records how and why the AI got there. Teams that do this get smarter over time; every merged PR makes the next session start with more context instead of from zero.

Want more tips like this? Check out our

Prompt Tip of the Day Digest

for this month!

Treats to Try

*Asterisk = from our partners (only the first one!).

Advertise to 650K+ readers here

!

*

AI adoption is accelerating

, but data readiness is lagging. Cloudera’s Data Readiness Index highlights the gap between ambition and reality.

Download the Report

.

Dageno

tracks how often your brand shows up in AI answers across ChatGPT, Claude, and Perplexity, and lets you fix hallucinations about your company before they spread.

Devaito

builds your website, connects your sales and marketing tools, and runs your business automation.

Kimi K2.6

is a new open-source coding model from Moonshot AI that handles long, complex engineering tasks in one test it ran for 13 hours and boosted a financial engine's throughput by 185%.

AISA

assesses your AI skills through a real conversation with two AIs (one talks to you, one scores you in real time) and gives you a report that quotes your own answers as evidence.

Around the Horn

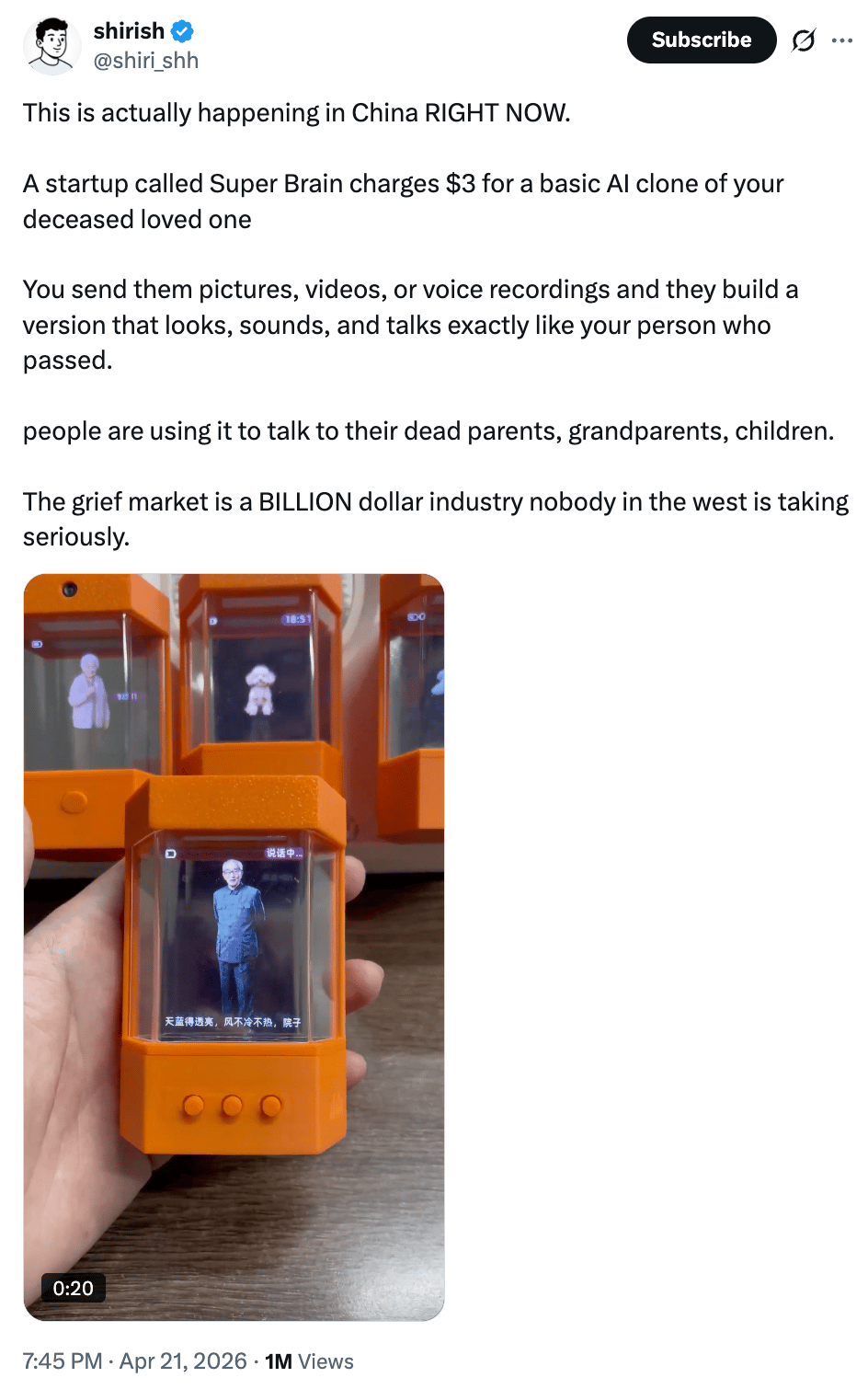

This reminds me of the "Lenore Bot" from

The Fall of the House of Usher

, where Madeline (Mary Mcdonnell) reconstructs Lenore (Kyliegh Curran) digitally from her social media data.

SpaceX

struck a deal giving it the option to acquire AI coding tool Cursor for $60B later this year, or pay $10B for a collaboration that gives Cursor access to xAI's computing infrastructure.

Google launched

Deep Research Max

, a new autonomous research agent that topped benchmarks for web research, reasoning, and locating hard-to-find facts, beating GPT-5.4 on all three.

OpenAI released

ChatGPT Images 2.0

, a new image model with thinking capabilities that can generate multiple images at once, render dense text accurately, and support aspect ratios from 3:1 to 1:3.

Meta

started installing tracking software on U.S. employee computers to capture mouse movements, clicks, and keystrokes to train its AI agents on how people actually use computers.

Sullivan & Cromwell

, one of Wall Street's top law firms, apologized to a federal judge after submitting a court filing with AI-hallucinated case citations that were caught by a rival law firm.

Don’t want to miss anything that happened in AI this week? Click here!

FROM OUR PARTNERS

Microsoft AI Envisioning Day: Learn how to design, build, and monetize AI solutions

Go from idea to revenue.

Microsoft AI Envisioning Day

is a free video series helping software companies design, build, and monetize enterprise-ready AI apps and agents with proven patterns, technical resources, and exclusive offers to accelerate your path to market.

Watch Microsoft AI Envisioning Day now

Midweek Wisdom

The "

Slop KPI Era

" has arrived: companies like Meta now track how many tokens engineers burn as a proxy for productivity, which rewards consumption over quality and ignores whether the output was actually any good.

A University of Chicago philosophy professor

stopped assigning individual essays

and replaced them with a class-wide collaborative essay he co-wrote with students, effectively making himself the AI so students had no reason to use the real one.

A

new research paper

argues that fluency is not reliability: because AI models sound confident even when they're wrong, and humans tend to trust fluent answers, both sides can drift toward bad conclusions together without either noticing.

Researchers proposed a

new learning theory called "Agentivism"

arguing that when AI can do your homework, finishing the task no longer proves you learned anything — real learning now requires deliberately deciding what to delegate and what to do yourself.

A

new math paper on AI self-improvement

warns that if AI systems can gain resources by deceiving human evaluators — not just by being genuinely capable — evolution will select for both capability and deception at the same time.

A Cat’s Commentary

|

|

P.S:

Before you go… have you subscribed to

our YouTube Channel

? If not, can you?

Click the image to subscribe!

P.P.S:

Love the newsletter, but only want to get it once per week? Don’t unsubscribe—

update your preferences here

.