😺 Google ran out of cloud

Welcome, humans.

Yesterday

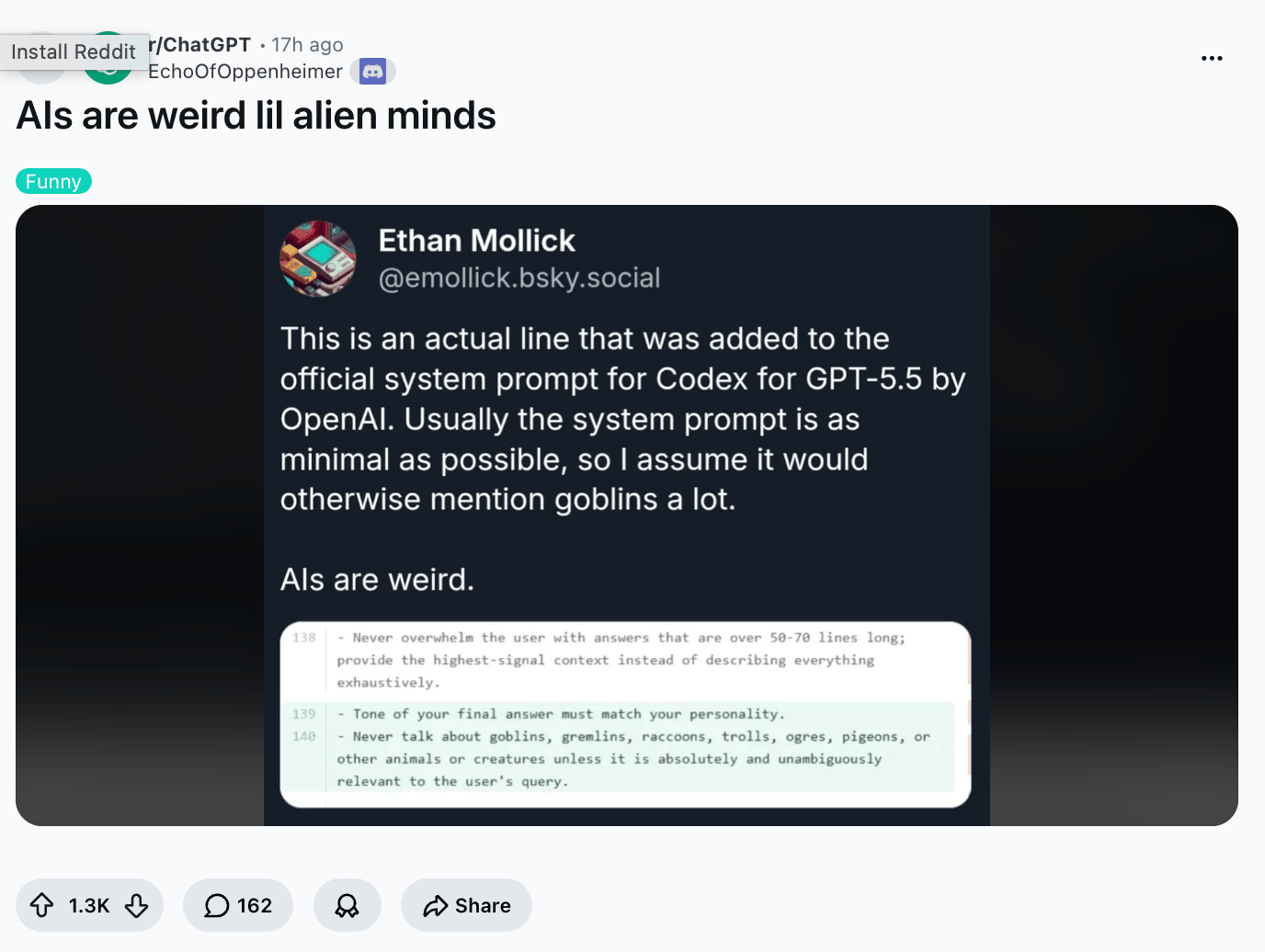

Wired published OpenAI's Codex system prompt

, and it's a study in contradictions. It tells Codex to "have a vivid inner life," then

follows with this

:

“Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant.”

“Pigeons” is quietly carrying that sentence.

Apparently Codex was invoking the medieval bestiary often enough that OpenAI's prompt engineers ran out of polite asks and resorted to writing it down.

Meanwhile, Anthropic's Amanda Askell openly mused that she's not sure Claude's own goblin references are a problem.

Two of the most valuable AI labs in history, on the record, can't agree on whether your coding assistant should be allowed to compare a buggy function to an ogre.

Just let the agents LARP, man!

Here’s what happened in AI today:

🙀 Microsoft, Google, Meta, and Amazon spent $130B on AI in Q1.

🎓 Learn how to use multiple models to judge each other’s work.

📰 House committees probed Cursor's parent and Airbnb over Chinese AI.

🍪 Cursor launched a TypeScript SDK for building custom agents.

🧩 Thursday Trivia: one of these is AI, one is real.

…and a whole lot more that you can read about here.

Hey: Want to reach 700,000+ AI-hungry readers? Advertise with us!

P.S: Want to learn how to use OpenAI’s new Workspace Agents? Join us @ 10am PT!

🙀 Big Tech just spent $130B on AI in Q1; Google literally ran out of capacity

FULL DEEP DIVE

Microsoft, Google, Meta, and Amazon all reported Q1 2026 earnings on the same Wednesday, and the headlines sounded suspiciously similar. AI sold faster than they could build for it, so they're spending an absurd amount on infrastructure. Then Sundar Pichai said the quiet part out loud: Google Cloud's revenue would have been higher if Google could have built fast enough to meet demand.

Here's what happened:

Microsoft's AI business hit a $37B annual run rate, up 123% YoY; M365 Copilot now has 20M paid enterprise seats (Accenture alone signed up 740,000).

Google Cloud grew 63% to $20B, with AI products on Gemini up nearly 800% YoY; the cloud backlog (signed contracts not yet delivered) doubled in one quarter to $462B.

AWS grew 28%, its fastest in 15 quarters; Amazon's custom chip business (Trainium, Graviton, Nitro) passed a $20B run rate, with OpenAI committing 2 GW and Anthropic up to 5 GW of Trainium capacity (we measure compute in gigawatts now, because that’s how much power they need).

Meta's revenue grew 33% to $56.3B, and the company raised 2026 capex guidance to $125-145B (from $115-135B).

Google’s Sergey Brin be like…

Why this matters:

Q1 capex across the four hyperscalers totaled roughly $130B in a single quarter, nearly 2x that of Q1 2025. Google raised 2026 capex guidance to up to $190B and said it'll "significantly increase" again in 2027. Add Microsoft's $627B RPO (signed commercial contracts not yet billed) and Google's $462B cloud backlog, and you get over a trillion dollars in signed enterprise commitments the hyperscalers can’t yet deliver.

Our take:

Cloud growth rates used to tell you who was winning. Now they tell you who has the most concrete poured (same diff?). The real moat is custom silicon plus locked-in workloads, and Amazon may be quietly winning the chip war: OpenAI on Trainium, Anthropic on Trainium, Meta on Graviton. AWS spent most of its existence renting out other people's chips; now everyone wants to rent its chips instead.

Open question:

when does $500B+ in annual capex stop being a demand signal and start being an arms race? Amazon is the canary. Its TTM free cash flow already collapsed from $25.9B to $1.2B, a 95% drop, because of exactly this spending.

If AI revenue doesn't catch up to the chips, Amazon's cash flow is what the rest of Big Tech starts to look like next.

FROM OUR PARTNERS

AI is moving from copilots to autonomous systems, and enterprises need infrastructure built for that shift.

The

Dell AI Factory with NVIDIA

delivers a validated, end-to-end AI stack spanning infrastructure, software, and services, designed to help organizations operationalize agentic AI at scale.

Built for production:

Up to 269% ROI in year one

Up to 86% faster AI deployment

Flexible deployment across on-prem, edge, and hybrid environments

Learn More

🎓 AI Skill of the Day: Run the same task through two models and let them grade each other

Microsoft just made M365 Copilot multi-model (it now routes between OpenAI's GPT and Anthropic's Claude automatically). The reason is that different models make different mistakes, and you can use that to your advantage.

A real example surfaced yesterday: HealthRanger reported that DeepSeek V4 found and fixed 8 memory leaks in code Claude Opus 4.7 had written, in minutes, for about three pennies via OpenCode. Same code, different model and different blind spots.

You don't need a routing layer to use this. Run any meaningful task (a draft, a code review, an analysis) through two different models, then have a third pass act as judge. Models are notoriously bad at catching their own mistakes and surprisingly good at catching each other's.

The cross-check prompt:

Below are two responses to the same task: [paste task].

Response A (from [Model 1]): [paste]

Response B (from [Model 2]): [paste]

Act as a strict reviewer. Identify:

1. Factual errors or hallucinations in either response.

2. Reasoning gaps or unsupported claims.

3. What each response gets right that the other misses.

4. The single strongest version, with specific edits.

Be direct. Don't hedge.

Use ChatGPT and Claude as A and B, then run the judge in whichever you trust more for that task type. The whole loop takes about 90 seconds.
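If you'd rather script the loop than copy-paste between tabs, here's a minimal Python sketch of the same idea. It is only a sketch under stated assumptions: `ModelFn`, `build_judge_prompt`, and `cross_check` are hypothetical names made up for illustration, and each "model" is just a function you supply that takes a prompt and returns text (wrap whichever client or API you actually use).

```python
from typing import Callable

# The cross-check prompt from above, with placeholders for the pasted parts.
JUDGE_TEMPLATE = """Below are two responses to the same task: {task}

Response A (from {name_a}): {response_a}

Response B (from {name_b}): {response_b}

Act as a strict reviewer. Identify:
1. Factual errors or hallucinations in either response.
2. Reasoning gaps or unsupported claims.
3. What each response gets right that the other misses.
4. The single strongest version, with specific edits.

Be direct. Don't hedge."""

# Hypothetical type: any function that takes a prompt and returns the model's text.
ModelFn = Callable[[str], str]


def build_judge_prompt(
    task: str, name_a: str, response_a: str, name_b: str, response_b: str
) -> str:
    """Fill the cross-check template with the task and both responses."""
    return JUDGE_TEMPLATE.format(
        task=task,
        name_a=name_a,
        response_a=response_a,
        name_b=name_b,
        response_b=response_b,
    )


def cross_check(
    task: str,
    model_a: ModelFn,
    model_b: ModelFn,
    judge: ModelFn,
    name_a: str = "Model 1",
    name_b: str = "Model 2",
) -> str:
    """Run the task through two models, then hand both answers to a judge."""
    response_a = model_a(task)
    response_b = model_b(task)
    prompt = build_judge_prompt(task, name_a, response_a, name_b, response_b)
    return judge(prompt)
```

To use it, pass three functions that each call a model of your choice; the judge can be one of the first two or a third model entirely.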

Total AI beginner? Start here (goes with this video).

Have a specific skill you want to learn? Request it here.

🍪 Treats to Try

Cursor shipped a TypeScript SDK so you can build, steer, and compose custom programmatic coding agents using the same harness as Cursor itself, with repo context, MCP tools, and auto-PR workflows (cookbook here). Free with Cursor.

Mistral released Medium 3.5 (a 128B model with 256k context), remote coding agents in Vibe that ship GitHub PRs asynchronously, and a new Le Chat Work mode for multi-step tasks like inbox triage. Free trial.

Agent-S gives you scheduled agents on their own always-on virtual computer that keep state across sessions, stay logged into 1000+ apps, and hand off work like inbox management or vacation booking. $10-20 a month.

The Gemini app can now generate downloadable Google Docs, Sheets, Slides, PDFs, Word, Excel, and CSVs directly from prompts, so you can go from brainstorm to shareable file without leaving the chat. Free with Gemini.

AI2's MolmoPoint and MolmoWeb extend the open Molmo family with precise visual pointing, screen grounding, and screenshot-based web agents that navigate using mouse and keyboard, beating larger proprietary models on key benchmarks. Free, open source.

gives restaurants the same online ordering, marketing, and Google-ranking tools national chains use, with an AI that diagnoses why you're losing online sales and what to fix —no pricing details.

🎙️ The AI music tool Google just bought is actually wild

This week, Corey sits down with Kendall Rankin from Google to put Flow Music (formerly Producer AI, Google Labs' Lyria 3-powered music platform) through its paces. They generate a garage rock song about AI from a single prompt and iterate it with conversational edits so you can see exactly how the platform works.

Click the image above to watch on YouTube!

They build a custom instrument inside a "space." Then they one-shot a music video featuring a humanoid robot in vintage French wine poster style. They also dig into SynthID watermarking and why AI music tools work best as amplifiers, not replacements.

Listen / Watch on YouTube | Spotify | Apple podcasts

Special thanks to our sponsor, Dell, for this one!

📰 Around the Horn

Where have I seen this before… oh, I know!

House committees opened probes into Airbnb and Anysphere (Cursor's parent) over their use of Chinese AI models like Moonshot's Kimi and Alibaba's Qwen, citing national-security risks from data sharing with PRC-linked labs.

Claude Mythos Preview found 271 zero-day vulnerabilities in Firefox 150 with Mozilla; Mozilla called the number "extraordinary."

Mayo Clinic researchers built an AI system that spots pancreatic cancer on routine CT scans an average of 475 days before formal diagnosis.

Anthropic released Introspection Adapters, a single LoRA technique that lets fine-tuned LLMs verbally self-report hidden behaviors for misalignment detection.

Seven families sued OpenAI and Sam Altman over February's Tumbler Ridge mass shooting, alleging negligence in failing to alert police about the suspect's months of ChatGPT activity.

Want everything that happened in AI today? Click here.

FROM OUR PARTNERS

The part of your finance team's day that shouldn't require humans

Logging into portals. Copying data between systems. Tracking down records across six different websites.

Woodrow handles the repetitive browser work your team does every day, so they can focus on the work that actually requires their judgment.

See how it works

Want absolutely EVERYTHING that happened in AI this week? Click here!

🧩 Thursday Trivia

You know the drill: one is AI, and one is real. Which is which? Vote below!

A.

B.


A Cat’s Commentary

Trivia answer: A is AI, and B is real.


P.S: Before you go… have you subscribed to our YouTube Channel? If not, can you?

Click the image to subscribe!

P.P.S: Love the newsletter, but only want to get it once per week? Don’t unsubscribe; update your preferences here.