AI's Potential And Limitations In Chip Design

Experts at the Table: Semiconductor Engineering sat down to discuss the opportunities and challenges of using AI in chip design, with Thomas Andersen, vice president for AI & Machine Learning at Synopsys; Sridhar Boinapally, senior director of analog/mixed signal tools/flow at Intel; Alex Starr, corporate fellow at AMD; Stuart Oberman, vice president for GPU hardware engineering at Nvidia; Silvian Goldenberg, partner and general manager for silicon engineering infrastructure at Microsoft; and Borivoje Nikolic, professor of electrical engineering and computer science at the University of California at Berkeley. What follows are excerpts of that panel discussion, which was held in front of a live audience at the recent Synopsys Converge conference.

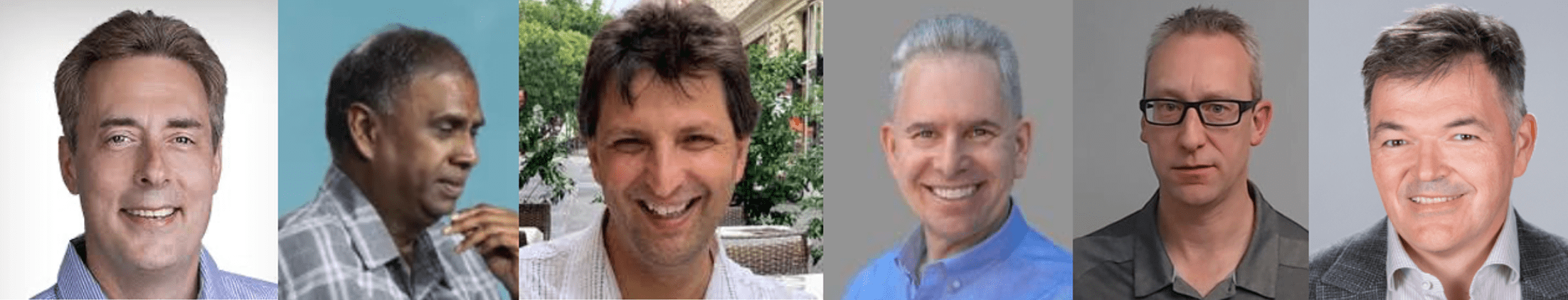

L-R: Synopsys’ Andersen; Intel’s Boinapally; Microsoft’s Goldenberg; Nvidia’s Oberman; AMD’s Starr; UC Berkeley’s Nikolic.

SE: How do you see AI fundamentally changing the chip design process over the next five years?

Andersen: It will not replace the tools themselves. I don’t see any technology that would be better. However, for the operator of the tool — the human who runs the verification tools, implementation tools, signoff tools — there are a lot of iterations happening. Part of it is refining the design, part of it is debugging and setting up your workflow. That type of work will be highly automated in the next two years, probably, and that’s where generative AI and advances in reasoning models have made a huge leap. I can automate workload generation, debug, verification problems, physical design problems, and it can accelerate my design closure quite a bit. The part that will take a little longer is complete automation, because with what exists today, it’s important to have a human in the loop to make sure that I don’t make mistakes and to double check that the system does what it’s intended to do. But in general, I do see where there is huge opportunity, and there will be quite a bit of disruption in the chip design flow in the coming years.

Starr: Five weeks or five years? Things are moving so fast. The rate of change, the acceleration of change, and the creation of models and agentic flows are going to completely transform the way we do silicon design. There’s going to be a real learning and skills development component to the engineering workforce. As we uplevel, orchestrate, and understand what’s going on in these systems, we’ll get through so much more in shorter time to market.

Oberman: My boss (Nvidia CEO Jensen Huang) famously said, ‘There’s task work, and then there’s the human oversight work.’ Where possible, use AI to completely consume the task work. So in terms of the chip design process, for any of the things we’ve engineered over the years, we’ve gotten very good at doing certain tasks. That includes parts of analysis, certain parts of coding, and parts of verification and debug. For the most part, those are tasks. But it’s crazy how fast the models are changing, whether it’s five weeks or five years. It would be nice if science would slow this down a little bit to give us time to keep up with the rate of innovation. We see so much technology becoming available to us, and we’re trying to deploy it as fast as we can.

Boinapally: This is a fundamental change for EDA. A lot of design is mundane work, where you have to do it over and over again in verification. All of that is gone. We are moving up in the value chain, going to a higher level in design.

Goldenberg: We have to get it right from day one. That’s how chip design works. It’s extremely expensive, in time and money. We have to maintain control from day one to the day we tape out. That’s the context in which we are applying AI technology. Silicon development is still going to be hard. Designers are still going to have to understand what is happening. The value of AI is how you go faster, and how you blur the lines between specialties. Right now, we have specialized engineers for different elements. This allows for more diversification of knowledge. That is what is going to lead to a huge number of designs. Design houses go linear, and then they go back, and that’s what costs us a lot of time.

SE: What do you tell engineering students?

Nikolic: Don’t be afraid. These agentic AI approaches will enable a future where we will see many diversified chips. The time it takes to develop chips will be shortened by the tools. Those are obvious things that are going to happen in the next few years. The biggest thing that makes people run or hide from AI is going to be in the things we discover. We put out a paper that AI has discovered a new cache replacement policy in general-purpose cores that beats human development. We also built an AI discovery flow to develop analog circuits. It did not quite invent new topologies, but it invented topologies that had been seen before but not used because they’re hard to reason about. So we need to educate students about AI and how to take advantage of all of this. We don’t have an answer for that at the moment, but we are really trying to figure that out.

SE: There’s a huge amount of investment going into AI these days. We’ve gone from machine learning to generative AI, and agentic AI now, and that’s been done in a span of four years. Where are the big opportunities, and how is this going to affect innovation in semiconductors?

Andersen: I’ve seen these graphs showing machine learning, AI, generative AI, but that doesn’t mean that generative AI is better than other techniques. There are different applications for different problems. For optimization problems, for even non-AI stuff, if I have a placement problem with millions of objects that I want to optimally place, it doesn’t mean that an AI or LLM algorithm is better at this. AI is all about automating what a human does. It’s good at cognitive things and recognizing things, maybe even running things. From that perspective, different techniques will be applied to different problems. In core EDA algorithms, there have been attempts with reinforcement learning to come up with better solutions, but that hasn’t really panned out, so we’re focusing on tasks that a human has to do. Number one, the human is going to have to be in control for quite some time, because otherwise we’ll build a lot of chips that don’t work if we try to automate everything. I would start with smaller tedious tasks, like debugging things, cleaning up constraint files, but not the whole workflow or the spec, or we may end up with things that don’t work. It’s like images being created where people have six fingers instead of five. Things are moving very fast. We might need to slow down a bit and focus on solving the tedious debug tasks first before we go to the next level. There is huge investment, and huge potential, but we also have to be practical.

Goldenberg: One area where we are starting to see a lot is hardware utilization of software. That isn’t explored enough today because of how we do design work. We design the architecture, and then the model. AI has the ability to shape the software and the implementation for a more optimal solution than what we see today. That’s one area where we definitely see something. But you need to be able to depend on AI 100% for chip design.

Boinapally: The obvious places where we see it will be in RTL code generation, verification, validation. In the future it will be across every aspect of chip design. At Intel we are seeing very positive signs. It may even take over all of chip design. I’m not so sure that going forward a human needs to be in control to ensure that what is automated is verified. Human beings are not necessarily better. They make lots of errors. Yet today they verify things all the time. Maybe at the highest level we will have fewer people.

Starr: We will get to fully autonomous. We will get to the point where we have solutions that have different agents that verify what the human would look at, so you’re not totally relying on one model to do the right thing. You will have collections of agents working on things together. So we will be going from the coding that we do today to enterprise-grade, fully scalable, deployable, robust, deterministic solutions — but there’s a step there.

SE: What differentiates one chip from another if these are push-button designs?

Starr: What we’re seeing is the emergence of people who can get lean frameworks to solutions faster. There’s a huge difference between teams with exactly the same tools taking different approaches at different levels of abstraction. Teams are really able to understand what AI is, where it’s going, and what techniques are available to get that differentiation.

Oberman: You have access to the same underlying frameworks and tools, so what’s going to make the difference? Isn’t that already the case? You have access to engineers who are very well trained. You can interview them. You know their capabilities. You’ve got great EDA tools. That’s happening today. Yet companies are not putting out the same products. Being able to codify some of that expertise, whether it’s based on the skilled engineers that you brought in or on the institutional expertise, and then embed it into the models themselves, or the frameworks — that will continue. Some will succeed more than others. We’re not at the point where it’s, ‘Stuff in, push button, chip out.’ Even if that were true, what kind of tooling did you put into that flow?