Representational drift under spontaneous activity - self-organized criticality enhances representational reliability

Abstract

Neural systems face the challenge of maintaining reliable representations amid variations from plasticity and spontaneous activity. In particular, the spontaneous dynamics in neuronal circuit is known to operate near a highly variable critical state, which intuitively contrasts with the requirement of reliable representation. It is intriguing to understand how reliable representation could be maintained or even enhanced by critical spontaneous states. We firstly examined the coexistence of the scale-free avalanche in the spontaneous activity of mouse visual cortex with restricted representational geometry manifesting representational reliability amid the representational drift with respect to the visual stimulus. To explore how critical spontaneous state influences the neural representation, we built an excitation-inhibition network with homeostatic plasticity, which self-organizes to the critical spontaneous state. This model successfully reproduced both representational drift and restricted representational geometry observed experimentally, in contrast with randomly shuffled plasticity which causes accumulated drift of representational geometry. We further showed that the self-organized critical state enhances the cross-session low-dimensional representation, comparing to the non-critical state, by restricting the synapse weight into a low variation space. Our findings suggest that spontaneous selforganized criticality serves not only as a ubiquitous property of neural systems but also as a functional mechanism for maintaining reliable information representation under continuously changing networks, providing a potential explanation how the brain maintains consistent perception and behavior despite ongoing synaptic rewiring.

Introduction

The neural system performs complicate tasks under rich spontaneous dynamics [

1

,

2

]. Instead of being simple background noise during a task, spontaneous neural activity may be of functional significance. For instance, it may serve as a prior and generate the prediction for the incoming signals to optimize the task implementation [

3

,

4

]. However, most studies that quantify long-term neural coding have focused on stimulus-evoked states, giving comparatively little attention to how the statistics of the spontaneous dynamics shape representational structure over time. One example is the recent studies about the stimulus-evoked neural representations over a long timescale. Traditionally, one would expect that the neural representations to the same input signals tend to be identical. Recent experimental studies focusing on stable task performance over a long timescale have revealed that neuronal representations for identical input signals exhibit significant variability but also share certain reliability across sessions [

5

–

7

]. First, data from various brain regions have confirmed a robust phenomenon called representational drift, where the preferences of single neurons change over time and the neuronal firing patterns of the circuit show weak correlations across sessions [

8

–

10

]. Second and more importantly, the correlations between the representation of multiple signals are relatively reliable across sessions [

8

], and in human V1 such relationships can remain approximately invariant to the interval between few weeks [

11

], suggesting a potential mechanism for extracting reliable task information from population neural activity despite its variability on the neuronal level response to a fixed signal. Theoretical studies proposed that such representational drift may reflect the redundancy of the task-solution space in the presence of noise, where there could be multiple networks to achieve the same task or similar neural representation [

12

,

13

]. Yet, whether and how the non-trivial spontaneous dynamics actively influence this long-term representational organization remains largely untested.

The spontaneous dynamics could influence neural representation through the synaptic plasticity. Synaptic plasticity [

14

,

15

], while is crucial for adaptation and learning, inevitably modifies the network structure according to the complex and variable spontaneous firing patterns and could disrupt the established neural representations in a circuit. Thus, representational drift can be attributed to the inevitable perturbation of the synapse connections induced by synaptic plasticity from spontaneous activity. On the other hand, if spontaneous dynamics constantly trigger synaptic updates, how do neural populations retain stable relational structure? This yields a central question: does plasticity induced by spontaneous activity merely drive drift, or can it, counterintuitively, also support reliability by constraining changes to subspaces that preserve low-dimensional structure?

In particular, the spontaneous dynamics of neuronal activity exhibit non-trivial features including scale-free avalanches [

16

,

17

]. Scale-free avalanches are cascading events characterized by powerlaw distributions in event size and event duration. Scale-free neural avalanches are remarkably universal, having been observed across diverse brain regions and species [

18

–

20

]. Such observations align with the concept of self-organized criticality (SOC) [

21

,

22

], wherein neural systems autonomously tune their states to criticality near a phase transition. Experimental support of SOC includes in vivo studies demonstrating that mouse visual cortex gradually restores critical dynamics following monocular deprivation [

23

]. One potential mechanism for the SOC in the neural system is the homeostatic plasticity [

15

,

22

], which regulates the network to achieve a stable state over time despite changes in external conditions or internal activity levels. Various computational models incorporating the homeostatic plasticity rule have successfully replicated SOC phenomena [

23

–

25

]. On the other hand, critical neural dynamics is inherently highly variable and exhibits sensitive response to small perturbations, as predicted by the critical branching model [

26

,

27

] and demonstrated in experimental data [

28

–

32

], apparently at odds with the requirement for reliable responses. This further deepens the conflict between the spontaneous criticality sustained by the plasticity and long-term reliable response. The dilemma of reconciling neural response sensitivity and reliability has been addressed by recent studies revealing that the critical neural avalanches in the excitation-inhibition (E-I) balanced networks (without synaptic plasticity) exhibit reliable low-dimensional representational pattern [

33

] and reduced trial-to-trial variability [

34

] in the presence of external inputs. However, it is unknown if this model could display SOC in the presence of homeostatic plasticity and whether its response patterns to external stimuli are still reliable amid the constant network structural changes induced by the plasticity.

In summary, how the highly variable spontaneous critical dynamics induced plasticity affects the neural representation as observed in the experimental data is still an open question. Does the critical spontaneous neural dynamics only induce the representational drift, or could it also contribute to the reliability against/admit the drift? Exploring this question is of fundamental importance for understanding the functional role of spontaneous neural activity.

Here, we address this gap by jointly analyzing spontaneous and evoked neural activities in the same cortical circuits and by building an E-I spiking network model endowed with homeostatic inhibitory plasticity. We first revisited and examined experimental data where the representational drift was observed [

8

] and we demonstrated that its spontaneous state exhibits near-critical features. Using an E-I network incorporating homeostatic plasticity specifically at inhibitory (to excitatory) connections [

35

], we showed that the model self-organizes to critical spontaneous dynamics and successfully recapitulates key experimental observations from in vivo visual cortex recordings [

8

] - specifically the phenomena of representational drift and representational reliability manifested by restricted representational geometry of multiple signals. We found that homeostatic plasticity under the variable spontaneous activity confines the network responses into a restricted subspace, while a randomized shuffling plasticity rule disrupts both SOC and the constraint of the representational geometry. The neural dynamics operating near criticality demonstrate effective low-dimension representation compared to subcritical or supercritical regimes, which emerges from the relative stable inhibitory synapse pattern with the minimal variance near the critical state. Overall, our work provides a linkage of two actively explored fields in neuroscience, neural criticality in spontaneous states and representational drift and stability in evoked states, which are relatively independently investigated. By explicitly linking spontaneous criticality, plasticity and representational geometry, our results offer a unifying account of how reliable representation can coexist with continual synaptic change. This linkage thus provides a more unified framework towards neural circuit dynamics and information processing.

Results

Representational drift admits critical spontaneous activity

Neuronal circuits face a fundamental challenge: maintaining reliable representations of external signals despite ongoing synaptic rewiring under variable spontaneous activity. The typical spontaneous state is characterized by scale-free avalanches, which have been considered as evidence for the critical brain hypothesis [

16

]. We retested this hypothesis by evaluating neuronal avalanches from spontaneous neuronal activity recordings in the V1 region of mouse cortex (see

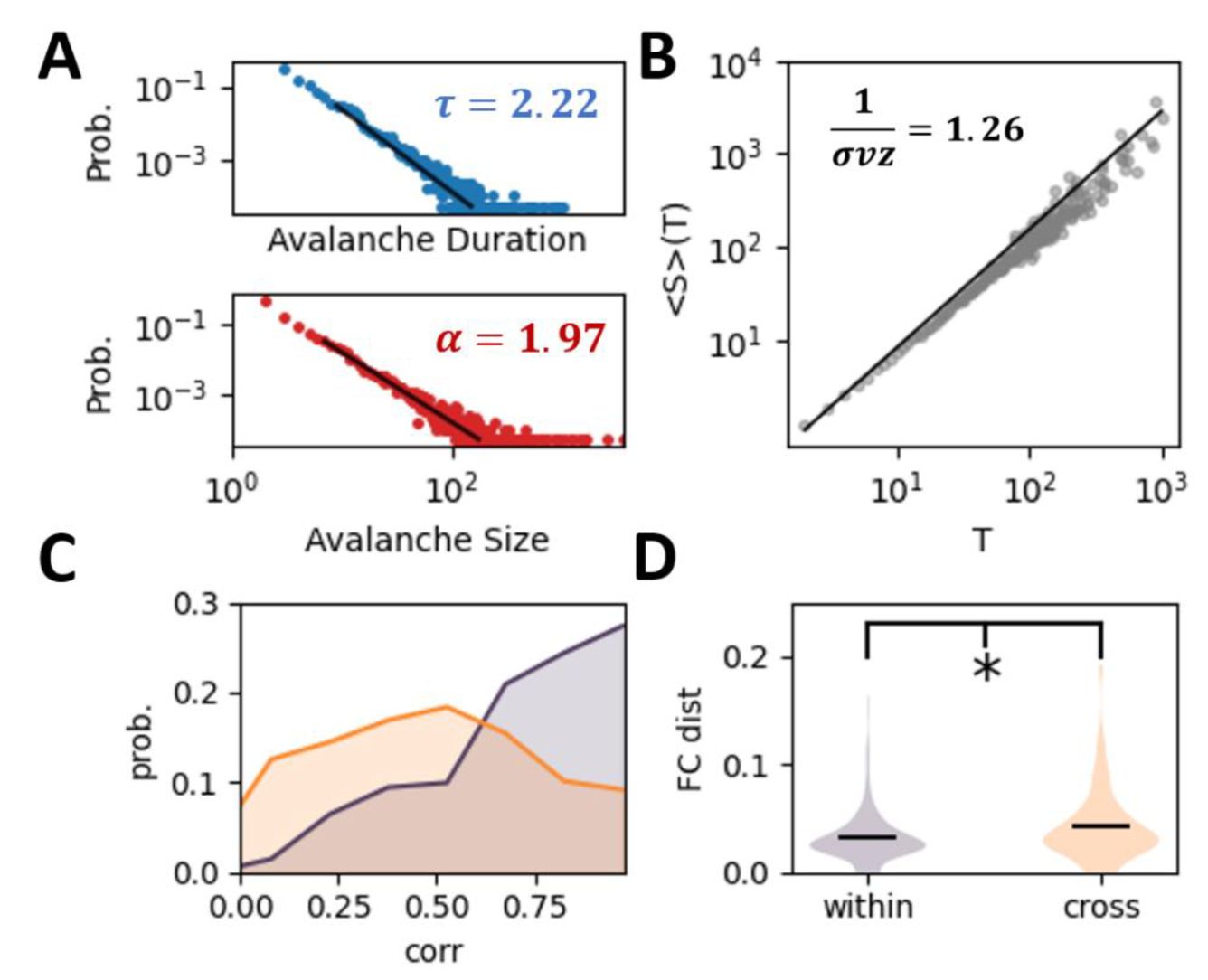

Methods

) across several sessions in in vivo experiments studying representational drift [

8

]. Both avalanche duration and size distributions follow power-law relationships (

Fig. 1A

), and the deviation from criticality coefficient (DCC), which represents the difference between observed exponent relations and those predicted by crackling noise theory (see

Methods

), is near zero [

17

,

23

]. These features indicate near-critical spontaneous dynamics in the mouse visual cortex across sessions, consistent with other experimental observations in the same brain region in the same species [

23

].

Variable spontaneous dynamics in the experimental data.

(A)

The power-law distributions of both avalanche duration and size of the experimental data in the spontaneous state. (

B

) Average avalanche size 〈S〉 given avalanche duration

T

. The relationship between the three power-law exponents aligns with theoretical predictions, i.e., DCC = |1/σvz − (

τ

− 1)/(

α

− 1)| = 0.05 . The black line refers to the theoretical prediction with the slope equal to (

τ

− 1)/(

α

− 1) .

(C)

The probability distribution of correlation of mean firing rate of neurons within-session (orange curve) and cross-session (olive curve). (

D

) The distance between the functional connectivity, i.e., correlation between the neuronal activity, within-session and cross-session (t- test,

p =

0.025, see Method for detail).

Criticality implies variable active states, demonstrated by a high susceptibility and entropy [

32

]. Here, we quantified the variability of activity patterns in the spontaneous state by evaluating the mean firing rate of neurons

v

∈

R

N

(

N

represents the number of neurons) across trials, and calculating the across-trial correlation of

v

within and across sessions. In experimental data, we confirm smaller cross-session correlations, indicating changing spontaneous activity patterns (

Fig. 1C

). We further evaluate the noise correlation in the spontaneous state of neurons, which has been considered to reflect the underlying structural connectivity pattern. The across-trial distance of noise correlation matrices within session is smaller than that of the cross sessions (t-test, p =0.02,

Fig. 1D

), which indicates the fluctuation of the underlying effective neuronal connectivity. To highlight the changing network structure, we directly estimate the effective connectivity (see Method) from the neuronal activity, where the within-session effective connectivity is more similar than the cross-session one (

Fig. S1

).

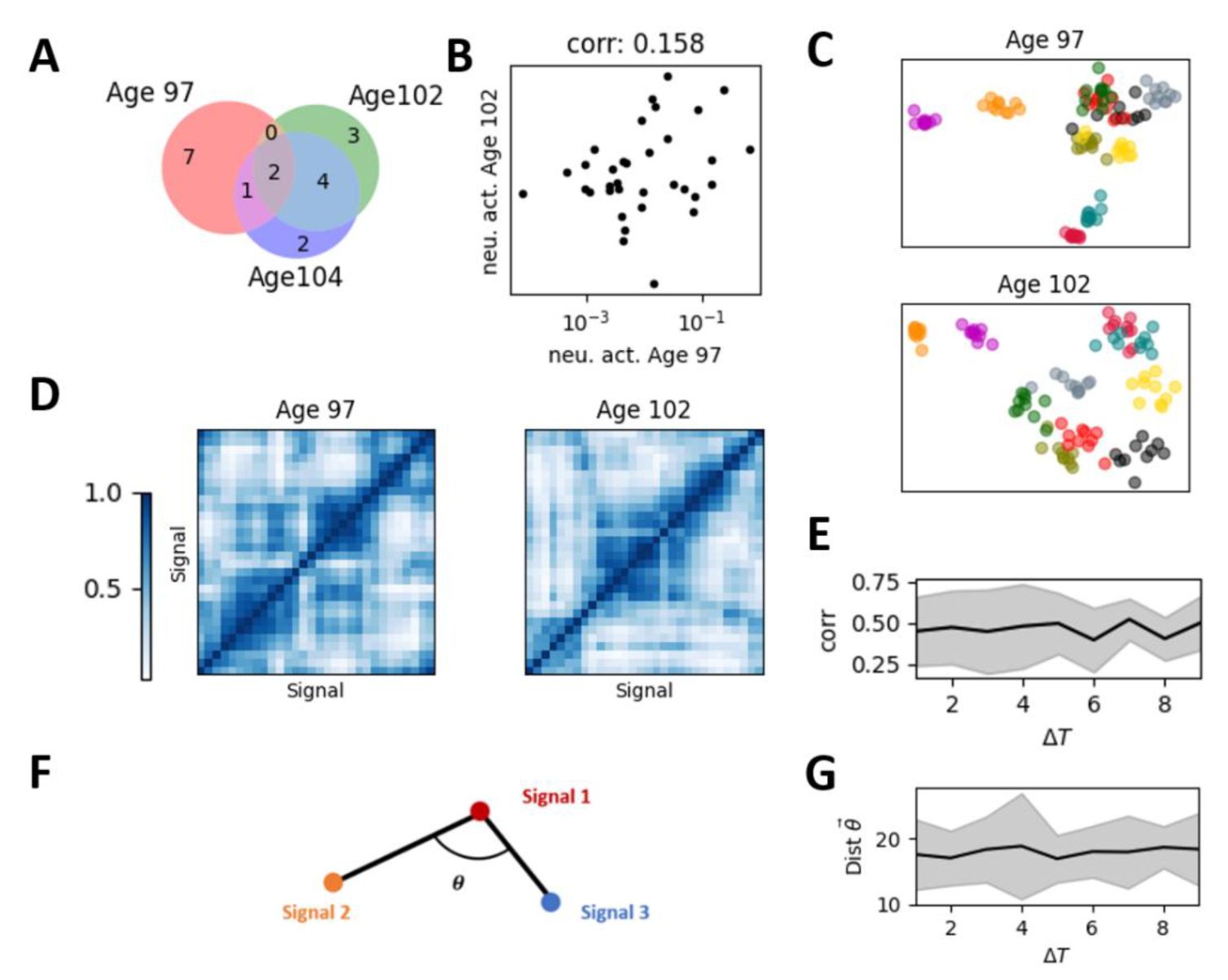

The key question is whether there are reliable response patterns from such highly variable critical spontaneous states to support the reliable behavior, especially with changing network connections across sessions due to plasticity. To answer this question, we began with a detailed analysis of the experimental data previously used to study representational drift [

8

,

36

]. We first examined singleneuron representations, generally considered unstable, by checking the consistency of active neurons for a given stimulus, defined as those with above-mean firing rates. For visualization, we used one mouse as the example to illustrate the following results, and checked the statistical significance in all experimental data (

Fig. S2

). As expected, we found that nearly half of active neurons change their activation across sessions, indicated by non-overlapping regions between differently colored circles (

Fig. 2A

). We next examined reliability at the population activity, i.e., how the combination of all neuron responses to the given stimuli, where considerable drift has also been observed. We evaluate the population activity by the mean firing rate in response to the given stimulus across trials, named as mean population response vector [

36

]. Consistent with previous results, we found that the correlation between mean population response vectors across sessions in the experimental data is quite small (

Fig. 2B

), indicating drift in representation of fixed signals.

Representational drift and the restricted representational geometry in the experimental data.

(A)

The set of active neurons for a given stimulus changed across sessions (days). Red, green, and purple dots represent active neurons on the corresponding sessions, with overlapping colors indicating neurons active across multiple sessions.

(B)

The correlation between the mean population response vector across sessions of the same signal.

(C)

The low-dimensional representation of the population response vector of different signals and different trials in two sessions (top panel and bottom panel, respectively). Each color represents a different stimulus, with individual points of the same color representing different trials of that stimulus.

(D)

The representation similarity matrix, i.e., the correlation matrix between mean population response vectors for all stimulus pairs within session.

(E)

The cross-session correlation of the representation similarity matrices across signals obtained on different sessions (e.g., the two matrices shown in

Figure 3D

) as a function of the temporal distance between these sessions.

(F)

Illustration of the representational geometry of population response with different stimuli (nodes), defined by the angles between edges of the mean population response, where each edge is denoted as difference between mean population response vectors of two signals (see Method). For reliable representational geometry across sessions, the angles between all the edges are supposed to be preserved.

(G)

Representational geometry undergoes subtle changes over time but remains confined within a restricted space, manifested by time-invariant drift distances.

Even though signal response patterns of a single stimulus turns to be variable, the structure of neuronal representations across multiple signals is reported to be relatively consistent [

8

]. We reproduced previous results showing that low-dimensional embeddings of mean population response vectors for different signals remain similar across sessions (see Method). As shown in

Fig. 2C

, the neural representation of two signals that are closer (like the blue and green clusters) on one session (top panel) may tend to remain closer on subsequent sessions (bottom panel). We quantified the reliability of neuronal representation structure of multiple signals using representation similarity matrices, which measure correlations between mean population response vectors of different signals within sessions (

Fig. 2D

). For each session, we obtained a representation similarity matrix, then calculated the correlation (or distance) between these matrices as a function of the time difference Δ

T

between sessions. This correlation typically takes value about 0.4, indicating a drift of representation. We found that, across-sessions, the change of correlations of representation similarity matrices is nearly independent of Δ

T

, consistent with findings in human visual cortex [

11

]. Intuitively, such drift can be considered as a rotation of lowdimensional representations as in

Fig. 2C

, where nodes (centers of the same-color clusters) change their positions across sessions (top panel and bottom panel), but their relative positions remain stable. To illustrate this overall rotation with stable internal structure and evaluate such relationship between the mean population response vectors of different signals within sessions, we characterize the geometry of the mean population response vectors using the angles between the edges linking mean population response vector pairs (see Method, and illustration in

Fig. 2F

) and found that representational geometry (distance of all the angles) remains restricted across sessions, as demonstrated in

Fig. 2G

.

In short, following established experimental measurements, we confirm that despite changes in both single-neuron and population response patterns over time, their representational similarity and representational geometry converge to a restricted space, as their changes are nearly invariant to the duration, which prevents the representations in the distant sessions becoming highly different.

These results provide direct evidence that representational drift and certain degree of reliability in the evoked states coexist with the critical spontaneous activity of the same circuit. Next, we investigated using modeling how these two pronounced aspects of neural dynamics with and without stimulations can be reconciled and whether the critical spontaneous activity plays nontrivial roles.

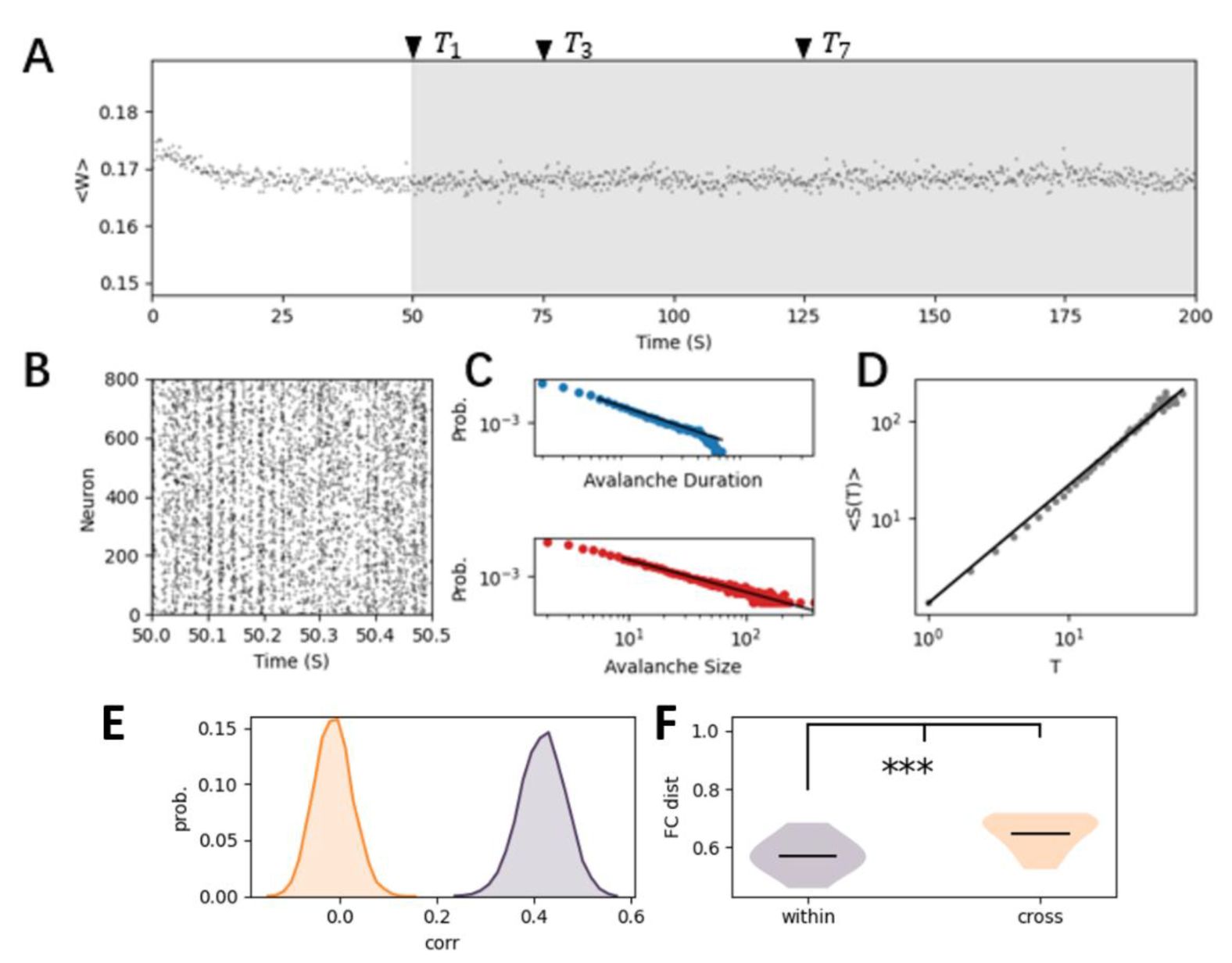

Self-Organized Criticality and Representational Drift Emerge through Homeostatic Plasticity

To study how these spontaneous dynamics may influence the signal representation in the changing networks (

Fig. 3

), we first needed to build a biologically realistic network model that captures essential dynamic features. As experimental data suggested changes of the underlying network connectivity across trials, we assumed plasticity is involved. Therefore, we used a biologically plausible homeostatic plasticity rule which has been explored in previous computational study [

35

,

37

], and developed an excitation-inhibition network model incorporating this plasticity rule at connections from inhibitory to excitatory neurons. This inhibitory spike timing-dependent plasticity (iSTDP,

equation 2

in Methods) regulates the synaptic strength (

Fig. 3A

), and constrains the background noise-driven network from a random initialization of connectivity to a relatively stable configuration with small fluctuations in connectivity weights (

Fig. 3A

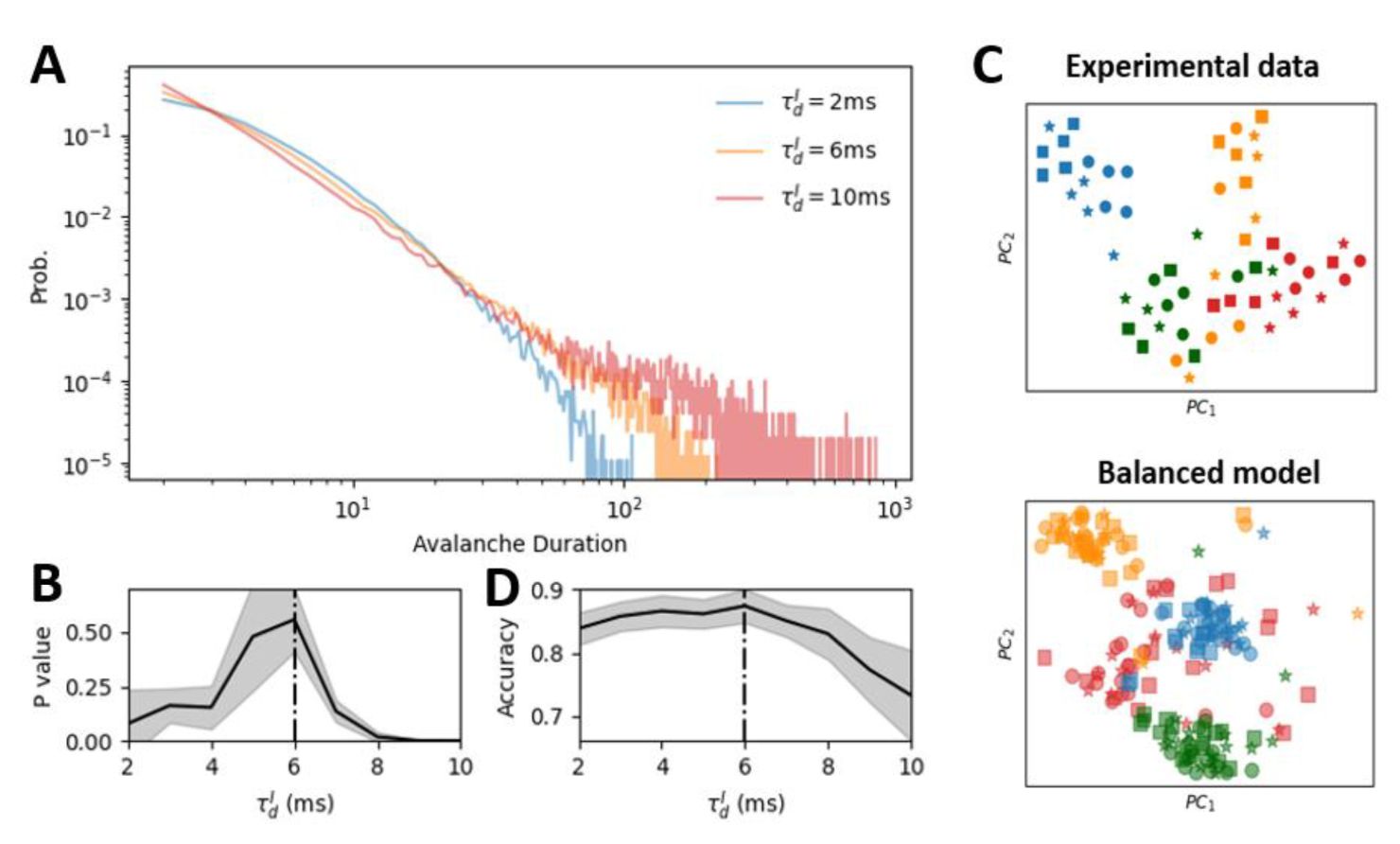

). The spontaneous dynamics self-organize into a critical state, characterized by power-law distributions of avalanche size and duration (

Fig. 3C

), with the relationship between these exponents predicted by the crackling noise theory (

Fig. 3D

). Similar to previous work [

35

], the self-organized criticality (SOC) mechanism in our model produces a critical line in the model parameter space where initial inhibitory synaptic strength does not influence criticality, while changing the inherent timescale of inhibitory synapses results in phase transitions, as we will discuss in the subsequent sections.

The self-organized criticality (SOC) in the E-I network with homeostatic plasticity.

(A)

The synaptic strength dynamics of a randomly initialized network with homeostatic plasticity, converged to a relatively stable state characterized by a nearly constant mean synapse strength from inhibitory neuron to excitatory neuron, as shown in the grey region. To study representational drift in this model, we selected network configurations at several time points ({

T

i

}

i=1,2..10

) within this stable state. These time points are referred to sessions in experimental data.

(B)

The raster plot of neural activity in the stable state.

(C)

The near power-law distribution of the avalanche duration (blue) and avalanche size (red), indicating that the homeostatic plasticity organizes the neural dynamics into the (near) criticality.

(D)

The relationship between the average avalanche size with respect to avalanche duration follows the theoretical prediction (DCC = |1/σvz − (

τ

− 1)/(

ρ

− 1)| = 0.04 ± 0.016).

(E)

The probability distribution of correlation of mean firing rate of neurons within (olive curve) and across (orange-curve) sessions.

(F)

The distance between the functional connectivity, i.e., correlation between the neural activity, within and cross-session.

Our plasticity model reproduces this variable critical spontaneous pattern, and the underlying fluctuations in network connectivity due to plasticity under critical spontaneous activity with background noise (

Fig. 3 E, F

, and

Fig. S1

). Networks with identical connectivity (mimicking same session in experimental data) naturally produce more similar activity patterns than those with changed connectivity patterns cross-session. Overall, we demonstrated that the variable spontaneous dynamic features in our SOC model are qualitatively consistent with experimental data.

We next study signal representation in our SOC model. We set the encoded signal as a 6 Hz frozen Poisson spike train for each neuron, with different signals corresponding to different stochastic samples of the Poisson signals (see

Methods

). We compared network configurations at different time points (T1, T2 in

Fig. 3A

) after convergence to SOC to simulate neuronal networks on different sessions. We then introduce different frozen Poisson signals into the networks of different sessions, each for 100 trials, and record population response vectors for each trial and signal at each session.

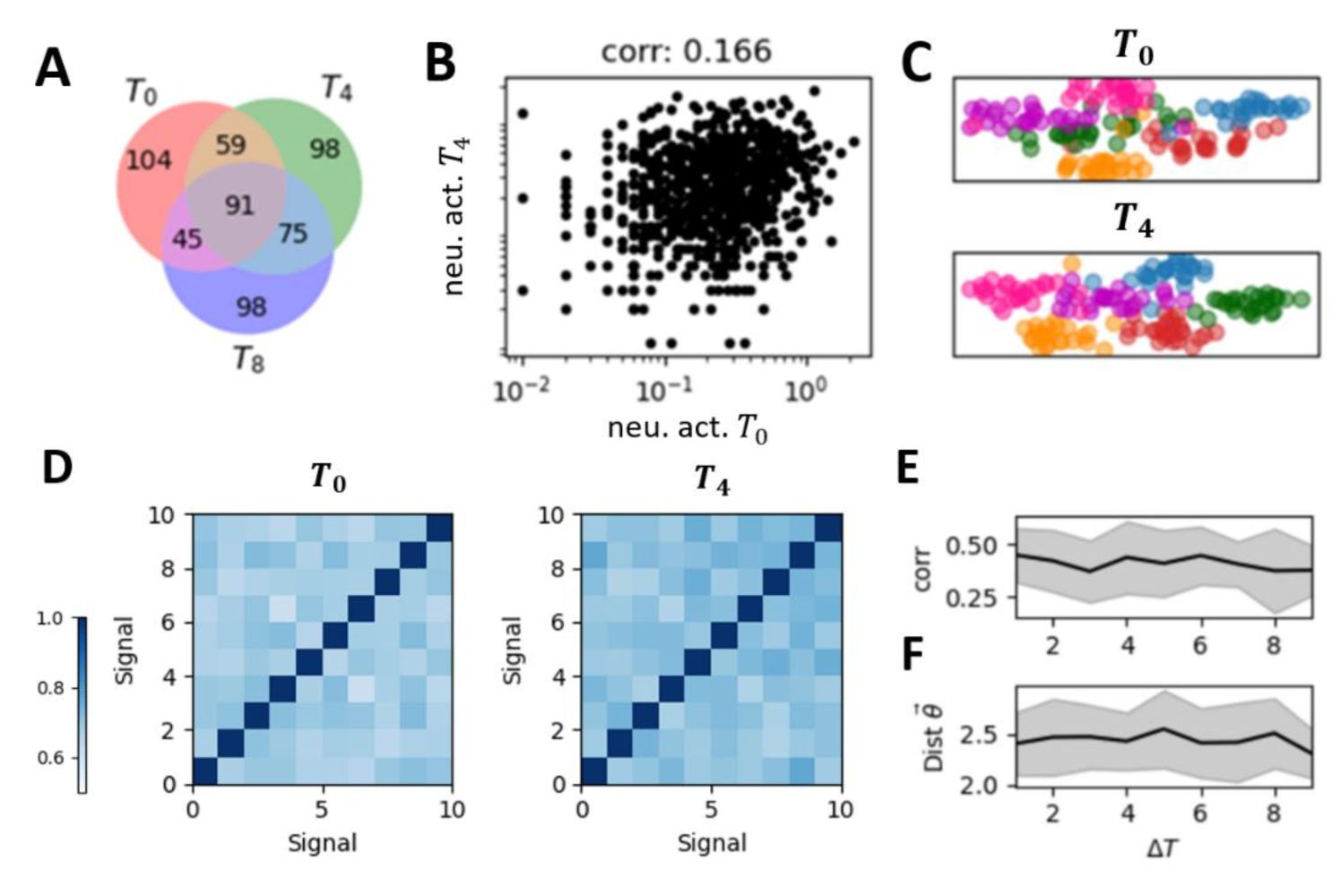

We performed the same analyses used for experimental data, with results shown in

Fig. 4

. Despite changing connectivity patterns induced by the interplay between the plasticity rule and spontaneous dynamics, these networks share common response dynamics properties similar to the experimental data: variable representations across sessions at both individual neuron and population response (

Fig. 4A-C

), and the restricted representational geometry (

Fig. 4D-E

).

Representational drift and the restricted representational geometry in the self-organized critical (SOC) model.

(A)

The set of active neurons for a given stimulus changed across sessions. Red, green, and purple dots represent active neurons on the corresponding sessions, with overlapping colors indicating neurons active across multiple sessions.

(B)

The correlation between the mean population response vector across sessions of the same signal.

(C)

The low-dimensional representation of the population response vector of different signals and different trials in two sessions (top panel and bottom panel, respectively). Each color represents a different stimulus, with individual points of the same color representing different trials of that stimulus.

(D)

The representation similarity matrix, i.e., the correlation matrix between mean population response vectors for all stimulus pairs.

(E)

The cross-session correlation of the representation similarity matrices across signals obtained on different sessions (e.g., the two matrices shown in

Figure 4D

) as a function of the temporal distance between these sessions.

(F)

Representational geometry undergoes subtle changes over time but remains confined within a restricted space, manifested by time-invariant drift distances.

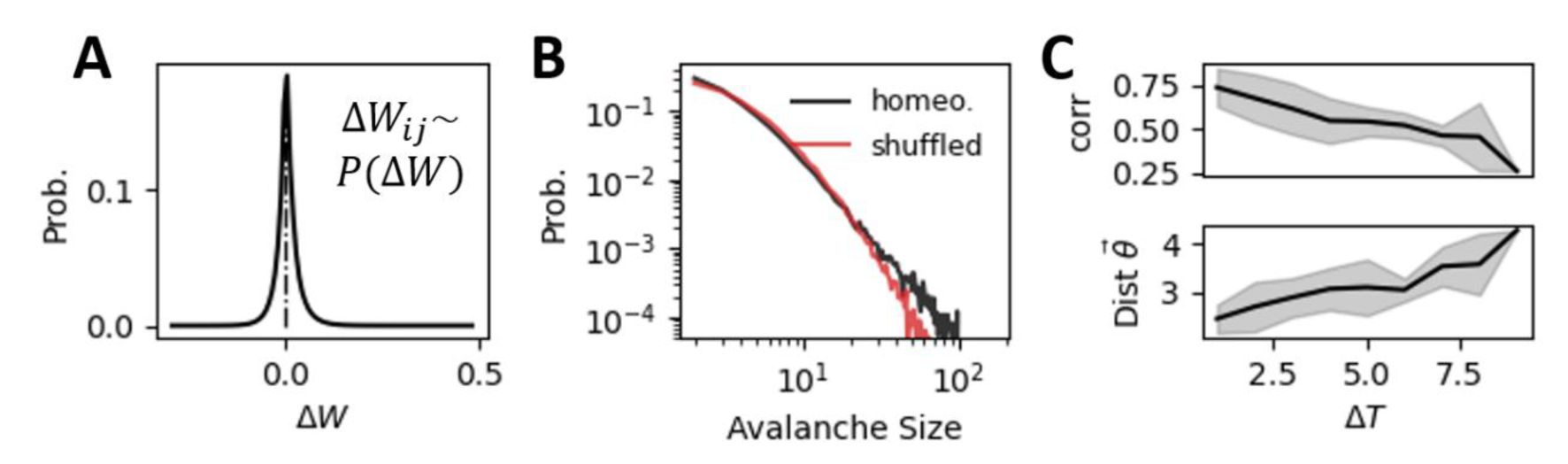

Although plasticity is driven by the spontaneous dynamics, the resulting changes of synapse strength are not random. Since the homeostatic plasticity rule regulates excitatory neuron’s firing rates to given values, connection strengths from inhibitory neurons to highly active neurons are more likely to be enhanced rather than reduced, which constrains the freedom of synapse strength

W

. To examine how homeostatic plasticity differs from random plasticity in terms of signal representation, we obtained the distribution of

ΔW

i,j

from numerical simulations of selforganized networks after dynamics convergence to SOC (gray region in

Fig. 3A

), as shown in

Fig. 5A

. We then built a network model with randomly shuffled plasticity where the individual synaptic strength change at each time step is randomly sampled from the

P(ΔW

ij

) distribution rather than being determined by neural spike timing. Such random plasticity shifts the network to a slightly subcritical state from the original critical state, where neural avalanche size deviates from power-law distribution (

Fig. 5B

). We found that the representational drift accumulates with sessions, the representational similarity reduces continuously and the representational geometry is no longer restricted (

Fig. 5C

). In summary, we showed that SOC induced by homeostatic plasticity can maintain reliable representations of external signals despite representational drifts.

Random shuffled plasticity biases the network from criticality and breaks down restricted representational geometry.

(A)

The distribution of synaptic weight changes

(ΔW

ij

) obtained from numerical simulations of the self-organized critical network with homeostatic plasticity (as described in

Figure 2A

). To create the “random shuffled plasticity” condition, we replaced the original homeostatic plasticity rule with random sampling from the empirically observed

ΔW

ij

distribution. Each connection was updated independently using this distribution.

(B)

The shuffled plasticity shifts the network into a slightly subcritical regime compared to the near-critical dynamics observed with homeostatic plasticity. This shift is further supported by an increase in DCC value for the shuffled plasticity network compared to the self-organized critical network with homeostatic plasticity (

DCC

homeo

= 0.040 ± 0.016, DCC

shuffled

= 0.072 ± 0.022

).

(C)

The randomly shuffled plasticity disrupts the restricted representation geometry observed with homeostatic plasticity. This is evidenced by a reduction in representational similarity across time (upper panel) and an increase in the distance between representational geometries (lower panel).

Enhanced Neural Representational through Self-Organized Criticality and its Network Mechanism

Self-organized criticality implies that the network model evolves into the critical state independent of the initial inhibitory strength distributions. However, the dynamics of the model and the phase transition can still be determined by several control parameters. Here, we set the timescale of inhibitory synapses

as the control parameter, following previous studies [

33

,

38

] . For a given

, starting with an arbitrary initial inhibitory connectivity strength distribution, the network with homeostatic plasticity will evolve to its stable dynamic state. We demonstrated that increasing parameter

induces a clear phase transition, as shown in

Fig. 6A

and

Fig. S3

, from an asynchronous subcritical state (

Fig. S3

, left panel) to a synchronous supercritical state (

Fig. S3

, right panel), through a critical state characterized by intermediate level of sparse synchrony (

Fig. S3

, middle panel) and its avalanche duration distribution can be best fitted by a power-law distribution (

Fig. 6B

).

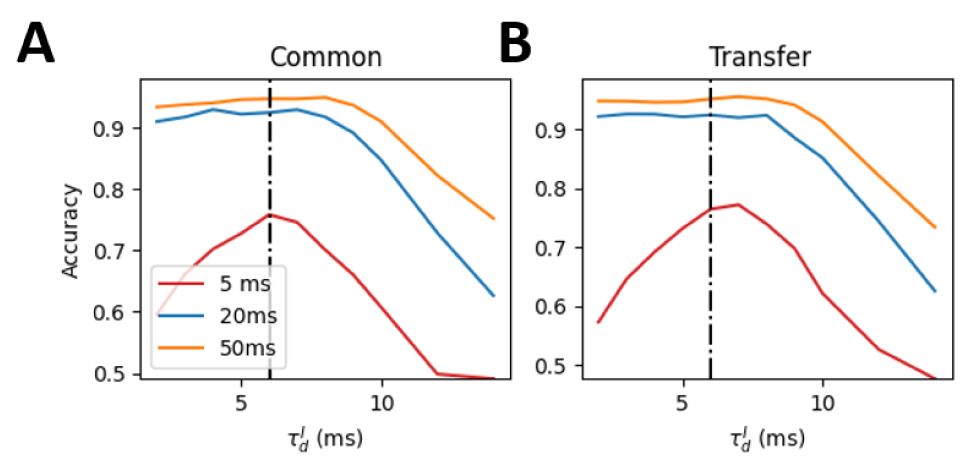

Self-organized critical (SOC) state maximizes cross-session low-dimensional representation reliability.

(A)

The distribution of avalanche durations for three representative values of

.

(B)

The p-value obtained from comparing each avalanche duration distribution to the optimal power-law distribution. A higher p-value indicates a closer fit to the power law, suggesting closer proximity to criticality. The network with

= 6

ms

(black dashed line) exhibits the closest fit to the power law, indicating a near-critical state.

(C)

Low dimensional representation of the linear discriminant analysis (LDA) results for four example stimuli (represented by different colors). Circles, stars and squares denote the trails of three different sessions. The close alignment of markers for the same stimulus cross-session indicates that the LDA decoder successfully captured stimulus-relevant features that are invariant to session-to-session variability. The upper and low panels show the results for the experimental data and model simulation, respectively.

(D)

The performance of the LDA decoder as a function of

in the model. Decoding performance was quantified as the classification accuracy. The SOC state (

= 6 ms) exhibited the highest cross-session decoding performance. The shade area in (B) and (D) represents the standard deviation across 10 network realizations.

The mechanism underlying this phase transition can be understood as follows: increased

prevents inhibitory currents from promptly canceling excitatory currents, creating a time window for neurons to fire together and having a higher chance to generate a more strongly synchronous activity under a given excitability. Such co-spiking between neurons later produces substantial increases in inhibition strength, which then inhibits a large population of neurons below their target firing rates and network becomes silent for a short duration (

Fig. S4

). Due to background input, the neurons gradually spike and reduce the inhibition strength. Once the excitability of network returns near the target value, then the neurons will have larger chance to co-spike. This co-spike will again cause a cyclical activity pattern. When

is small, the inhibitory current can quickly counteract the excitatory current with few neurons, thereby maintaining the network’s excitability close to the target value without inducing network-level collective activity. Only when

is moderate (e.g., 6 ms), the network converges to the target excitability, exhibiting sparse synchrony near the transition from asynchrony to full synchrony

To directly evaluate neural representation across sessions, we use linear discriminant analysis (LDA) (see

Methods

) to train a common decoder to identify each signal by using response activities of the same signal from different sessions. Generally, LDA returns a discrimination vector for each signal; we added another biologically reasonable constraint to make these discrimination vectors nonnegative. By projecting activity onto the discrimination vectors, we showed that the common decoder can captures activity features of the same signal across networks of different sessions in the experimental data (

Fig. 6C

, the decoding accuracy for all subjects in experimental data is

0.61 ± 0.15

). In

Fig. 6C

, points with the same color are results for the trials of the same signal and different shapes represent different sessions. Importantly, the decoding accuracy in the model peaks near the critical state (

Fig. 6D

). We further examined the results when the input stimuli have different durations, and also tried different decoding schemes, including common decoder and transfer decoder (see

Methods

). The critical state robustly outperforms subcritical and supercritical states (

Fig. S5

) in all cases. This result indicates that the criticality enhances the cross-session lowdimensional representation reliability.

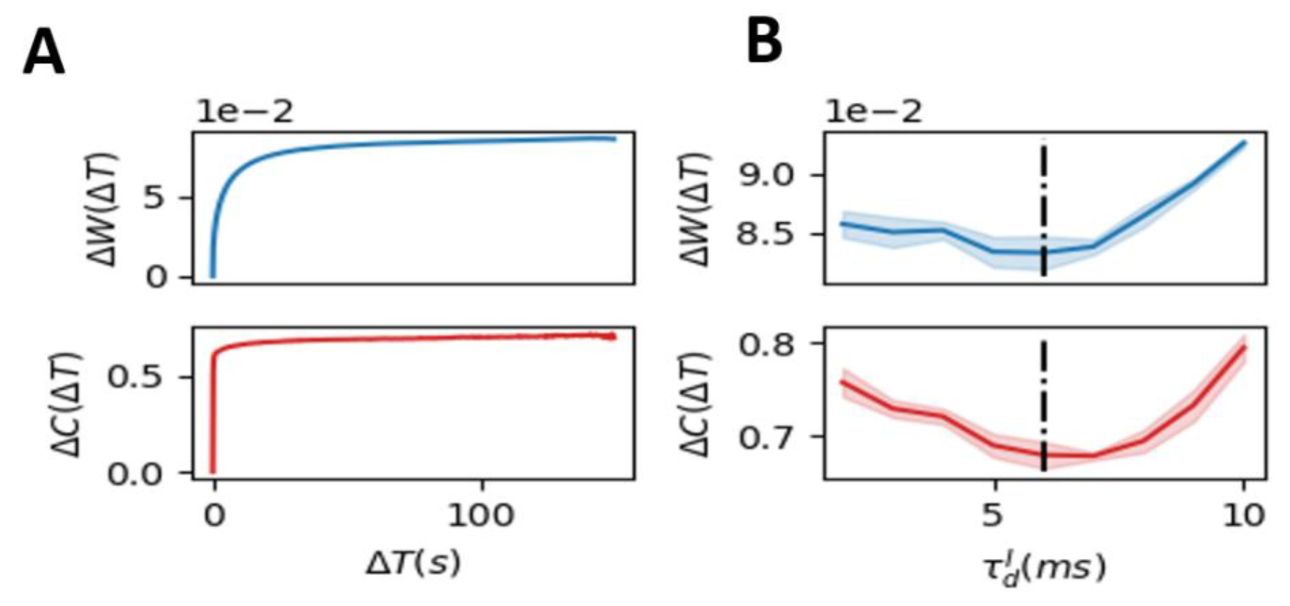

We next investigated the evolution of inhibitory synapse by examining changes in synapse strength patterns and indegree patterns during the self-organizing process in the presence of the homeostatic plasticity. The synapse strength patterns refer to the average strength of all inhibitory synapses, while the indegree pattern refers to the average total inhibitory synapse weights of each excitatory neuron. Both changes of synapse strength (evaluated by the L1 distance between different sessions, see

Methods

) and indegree patterns converge to static non-zero values after a transient period (

Fig. 7A

), suggesting that homeostatic plasticity-induced connectivity structures show long-term stability, where the difference of connection between sessions separated by large time interval is similar to that by medium time interval, which shows the restricted representational geometry. In contrast, the randomly shuffle plasticity leads to the continuously increasing differences for both synapse strength and indegree pattern (

Fig. S6

). We next examined how phase transitions governed by

influence this restricted structural similarity. The changes of synapse strength and indegree at the stable state after the transient are both minimized near the critical state (

Fig. 7B

), which thus has enhanced reliable representation. Overall, we showed that SOC contributes to reliable representations in changing networks by restricting the drift of connection structures and response dynamics.

Structural mechanism underlying restricted representation.

(

A

) The change in synaptic weight patterns (

ΔW

, blue) and the change in inhibitory degree (

ΔC

, red) for each excitatory neuron over time in the SOC network (

= 6

ms

). Both

ΔW

and

ΔC

rapidly stabilize after a brief period of initial fluctuations. (

B

) The static state value of

ΔW

and

ΔC

as the functions of

. The near-critical state (

= 6

ms

) exhibited the lowest

ΔW

and

ΔC

indicating that the network structure is most stable at criticality.

Discussion

A key question in understanding neural representations across a long timescale is how neural networks maintain reliable response patterns under the inevitably plasticity induced by the selforganized and highly variant spontaneous dynamics. Here, we propose a self-organized criticality neural network model capable of reproducing both spontaneous critical states and representational drift with restricted representational geometry manifesting representational reliability, as observed experimentally. Our analysis suggests that the critical state constrains the fluctuations of network connectivity within a narrow regime, thereby facilitating reliable lowdimensional representation despite ongoing plasticity.

In our study, SOC in an excitatory-inhibitory (E-I) network is achieved through biologically plausible homeostatic plasticity of inhibitory connections [

22

,

35

]. The concept of inducing SOC via homeostatic plasticity has previously been explored in various model frameworks [

23

,

24

], as homeostatic plasticity inherently adjusts network excitability towards a stable value which can be put near the critical bifurcation point. In our implementation, inhibitory spike-timing-dependent plasticity (iSTDP) is employed to realize homeostatic plasticity, similar to previous computational studies, by modulating network excitability through inhibitory synapse [

35

]. SOC emerges in our model when the decay timescale of inhibitory receptors is moderate, controlling the co-activation of excitatory neurons [

39

]. The bifurcation observed here likely corresponds to a transition from asynchronous (

Fig. S3

, blue) to synchronous (

Fig. S3

, red) network states. In a simplified E-I model without plasticity, this control parameter has been shown to induce a Hopf bifurcation, where irregular spikes of individual neurons coexists with the scale-free avalanches [

33

,

34

,

38

].

Representational drift, which challenges the traditional assumption of stable neuronal tuning over prolonged periods, has been reported across multiple brain regions, species, recording techniques, and spatial scales [

6

,

7

]. Drift in individual neuronal responses and population response vectors has been commonly observed in sensory cortices, including the visual [

8

] and olfactory cortices [

9

], higher-order regions such as the posterior parietal cortex [

5

], and subcortical structures like the hippocampus [

40

] in mice, and also including the primary visual cortex in humans [

11

]. While representational similarity and geometry of multiple signals also exhibit drift in the olfactory cortex [

9

], some studies indicate a relatively restricted geometry in the visual cortex and hippocampus [

8

,

11

,

40

]. This observation aligns with our analysis of experimental data from primary visual cortex (V1) during passive visual information representation. The inconsistency in the drift geometry across different brain regions remains poorly understood and may reflect inherent differences in regional stability or the increasing complexity of signal representation along the cortical hierarchy. A recent study using simultaneous multi-region recordings revealed heterogeneous stability across cortical areas during go/no-go tasks, with visual cortex representations remaining relatively reliable (as evidenced by higher accuracy of common decoders), whereas motor cortex representations exhibited relative instability [

10

]. Task complexity also influences representational stability: simple stimuli, such as drifting gratings, elicit reliable representations across sessions, whereas complex stimuli, such as natural movies, induce representational drift [

41

]. As the higher order brain regions in the cortical hierarchy carries more complex cognitive function [

42

,

43

], they could also contribute to the instability across sessions.

Our SOC model successfully reproduces the commonly observed representational drift while capturing the restricted representational geometry observed experimentally in visual cortex data. Combined with findings that random plasticity alone leads to purely representational drift and that optimal cross-session reliable low-dimensional representation occurs near criticality, our results suggest that the representational stability exhibiting heterogeneity across brain regions may reflect that the spontaneous states in different brain regions have different proximity to criticality [

44

,

45

].

How reliable task-related information emerges from variable neural representations? Two hypotheses have been proposed: the coding null-space hypothesis and the co-varying decoding hypothesis [

6

]. The coding null-space hypothesis suggests that representational drift occurs exclusively within task-irrelevant subspaces, which requires a supervised learning mechanism precisely counteracts random fluctuations to maintain orthogonality between drift and representation spaces. This strong assumption has been challenged by experimental evidence indicating that decoders trained across multiple sessions perform worse than session-specific decoders, implying drift occurs within task-relevant representational spaces as well [

46

]. Nevertheless, representational spaces indeed exhibit less drift compared to noise-variance spaces, highlighting their relative stability [

46

]. This relatively stable representational space could emerge from biologically plausible learning rules; for example, computational models employing Hebbian and anti-Hebbian rules to optimize representation similarity successfully reproduce representational drift [

12

], and recent study implementing the STDP for fast learning to reduce the representational drift [

47

]. A more intuitive analogy is training simple deep neural networks for image classification [

13

,

36

]: although the loss function converges to near-zero values, stochastic gradients yield multiple networks with similar performance but variable neuronal tuning curves, demonstrating representational drift alongside stable task performance. Unlike these models that mainly constrain task states without explicitly considering the contribution from spontaneous states, our SOC model regulates mean firing rate in the internal spontaneous state which constrains representational geometry in the task state. The mechanism is that the underlying network connection is driven into a regime avoiding the further accumulation of the drift and the changes in network connectivity strength in this regime is smallest at the SOC state. Instead of considering the perfectly stable representation space, we propose that a restricted representation space observed experimentally can be easily obtained by simple unsupervised plasticity rule that underlies the co-evolution of network connectivity and spontaneous activity. Alternatively, the covarying decoding hypothesis posits that despite changing representations, stable task performance can be maintained if downstream decoder networks receiving feedforward inputs adapt their synaptic connections via appropriate plasticity rules (e.g., Hebbian [

46

] or spike-timing-dependent plasticity [

48

]). In this context, our SOC model exhibits relatively stable yet flexible response pattern comparing to the noncritical states, potentially facilitating the rewiring in downstream decoder networks for a stale decoding.

Critical systems typically exhibit highly variable and sensitive dynamics [

32

], seemingly contradicting the requirement for stable representations. In the classical branching model, the critical state is characterized by maximal dynamic range and sensitivity to small perturbations [

26

,

27

] , as confirmed in in vivo experiments [

29

,

30

] . As expected, this simplest non-equilibrium model also exhibits minimal reliability to fixed input signals. Reliable critical states have been identified in E-I balanced networks, demonstrating stimulus-induced reductions in trialto-trial variability [

34

,

49

] and near-optimal low-dimensional representations [

33

]. Here, we extended this framework by incorporating homeostatic plasticity to achieve a reliable critical state, revealing constrained representational geometry at criticality. By showing that more variable synapse dynamics happen in either random shuffled plasticity or in the self-organized non-critical states, we highlight that the critical spontaneous dynamics in the E-I network provide an effective and reliable low-dimension representation for the downstream neurons to decode the stimulus against both the random background noise and the variation in the network connection due to the homeostatic plasticity.

Our model yields several experimentally testable predictions. First, the low-dimension critical representations can be directly validated by perturbing the cortex to drive it away from the critical regime, and measuring resultant changes in cross-session representation [

30

]. This perturbation can be done either pharmacologically [

30

], or through the visual depravation [

23

]. Second, advances in multi-region recording [

6

] allows further validation on whether heterogeneous representational stability across brain regions correlate with their locally distinct spontaneous dynamics [

44

,

50

]. In summary, integrating empirical data analysis and computational modeling, we demonstrated that representational drift and restricted representational geometry is modulated by spontaneous dynamics, and the apparently most variable critical state can counter-intuitively facilitate long-term reliability low-dimension representation. This framework bridges neural representation during the evoked state with spontaneous activity in the absence of stimulations, offering insights into how the baseline states of the brain may affect cognitive function and brain disorders. Future work may further study how the representational drift contributes to cognitive flexibility while maintaining performance stability, where critical spontaneous state may be highly relevant for its variability and sensitivity to perturbations in a constantly changing environment.

Methods

Experimental data

We utilized data from the Allen Institute’s Visual Coding 2-Photon dataset (

). This comprehensive dataset contains both spontaneous and stimulus-evoked neural activity recorded from mouse visual cortex across multiple sessions using two-photon calcium imaging [

8

]. Our study involved data from 78 mice, with recordings spanning three days (sessions). For the spontaneous activity, mice were presented a fixed gray screen (

0.248 ± 0.002

hours for each mouse). For evoked activity, mice were presented with repeated 30-second natural movie stimuli. The recorded neuron comes from the primary visual cortex across all layers, as descripted in [

36

]. To study spontaneous neural dynamics and due to short recording interval of the spontaneous state, we aggregated spontaneous activity recordings from all mice across all experimental sessions to perform avalanche analysis, providing a robust dataset for examining intrinsic network properties. To investigate external signal representation, we followed established preprocessing methods from previous studies [

8

,

36

]. Specifically, we divided each 30-second natural movie into 30 distinct clips, treating each 1-second clip as a unique signal type. For each clip, we calculated the population response vector during the corresponding time window, which served as the neural activity representation for that particular signal. When we evaluated the relationship between duration of days and representational similarity and representational geometry, we removed the cases with duration larger than 10, as there are fewer than three case for each large duration of days.

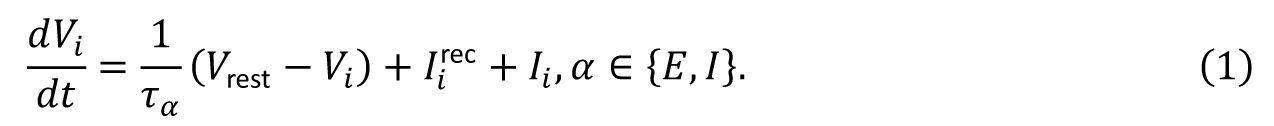

Spiking neural model

We consider an excitation–inhibition neural network with conductance-based leaky integrate-and-fire neural dynamics. This model can unify several biologically observations of neural dynamics across different scales, including the irregularity of individual neuronal spikes, weak synchrony of neuronal spiking, collective oscillations at the population level and scale-free avalanches, and it has also been used to study the neural response reliability [

33

,

34

]. Our model comprises

N

= 1000 randomly connected neurons with connection probability

p

= 0.2, where 80% of neurons are excitatory and 20% are inhibitory. The dynamics of system is determined by the membrane potential

V

i

of each neuron, where

In the absence of recurrent input

and external input

I

i

, the linear leak term will drive the dynamics into a resting potential at

V

rest

. When the neuron

i

receives enough drive and

V

i

growths beyond a given threshold

V

th

, a spike is then generated and transmitted to its output neighbors. We then reset the membrane potential to

V

reset

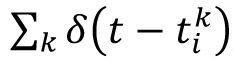

and the neuron enters into a refractory period of 2 ms (excitatory neuron) or 1 ms (inhibitory neuron). We described the spike activity of neuron i as the spike train

S

i

=

, where

is the

k

-th spike time for neuron

i

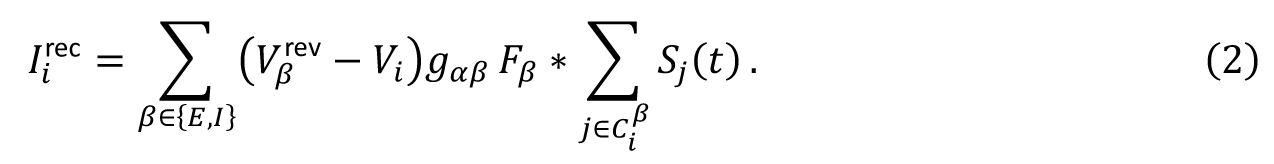

. In the conductance-based model, the recurrent input a neuron receives is modulated by the its own membrane potential,

V

i

, and is expressed as

Here,

is the reversal potential of the α (E or I) neuron and

g

αβ

is the synaptic strength from the

β

neuron type to the

α

neuron type,

refers to the input neighbor of neuron

i

, and

F

β

is the biexponential synaptic filter.

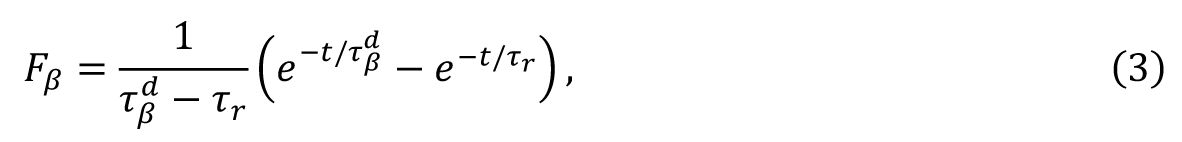

F

β

is expressed as

where

τ

r

and

are the synaptic rise time and decay time for

β

neurons, respectively. We set

τ

r

= 0.5 ms and

ms to reproduce the fast excitatory synaptic dynamics generated by the AMPA receptor. The

values are used as the control parameters for the critical transition, corresponding to the timescale of the GABA

A

receptor.

L

i

in

Eq. 1

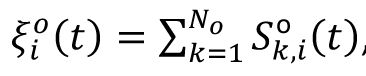

represents the background input from other circuits. Each neuron in the spontaneous state receives the

N

o

=

p ⋅ N

E

independent Poisson spike train,

S

Poisson

, at a frequency

Q

, as background and we labeled the spike train of background input targeting on neuron

i

as

; then,

I

i

is given by

Homeostatic plasticity

The homeostatic plasticity is implemented on the synapse from inhibitory neurons to excitatory neurons,

, where

j

represents the source inhibition neuron and i represents the target excitation neuron. We use the spike-timing-dependent plasticity rule, following study [

35

], where the synaptic strength

W

ij

updates to

W

ij

+ Δ

W

ij

as:

In this equation,

η

represents the learning rate,

r

0

represents the depression factor and

x

i

represents the synaptic trace as follows

This plasticity rule can be viewed as a mechanism to constrain the activity of excitatory neuron near the depression factor

r

0

: if (

x

i

—

r

0

) > 0 and inhibition neuron spikes, then the inhibition connection will be enhanced, and verse vice.

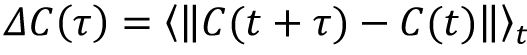

To investigate the dynamics of connectivity structure driven by the spontaneous activity, we calculated the change of synapse strength as

, here ‖·‖ represent the L1 distance, and we considered the change of indegree pattern as

, where

C

i

(t)

= Σ

j

W

ij

(t)

is the total synaptic strength of incoming inhibitory neurons of the excitatory neuron

i

.

To study the representation of external stimulus in our model, we defined the signal as the frozen Poisson spike train into the network. On top of the background input, each neuron is given by an additional spike train

, and

is the

k

th Poisson spike train on neuron i with the frequency

Q

o

. The stimulus signal lasts for 20 ms in the main text, which aligns with the characterized timescale of our model. We also change the duration of signal to examine the robustness of our results (

Fig. S5

).

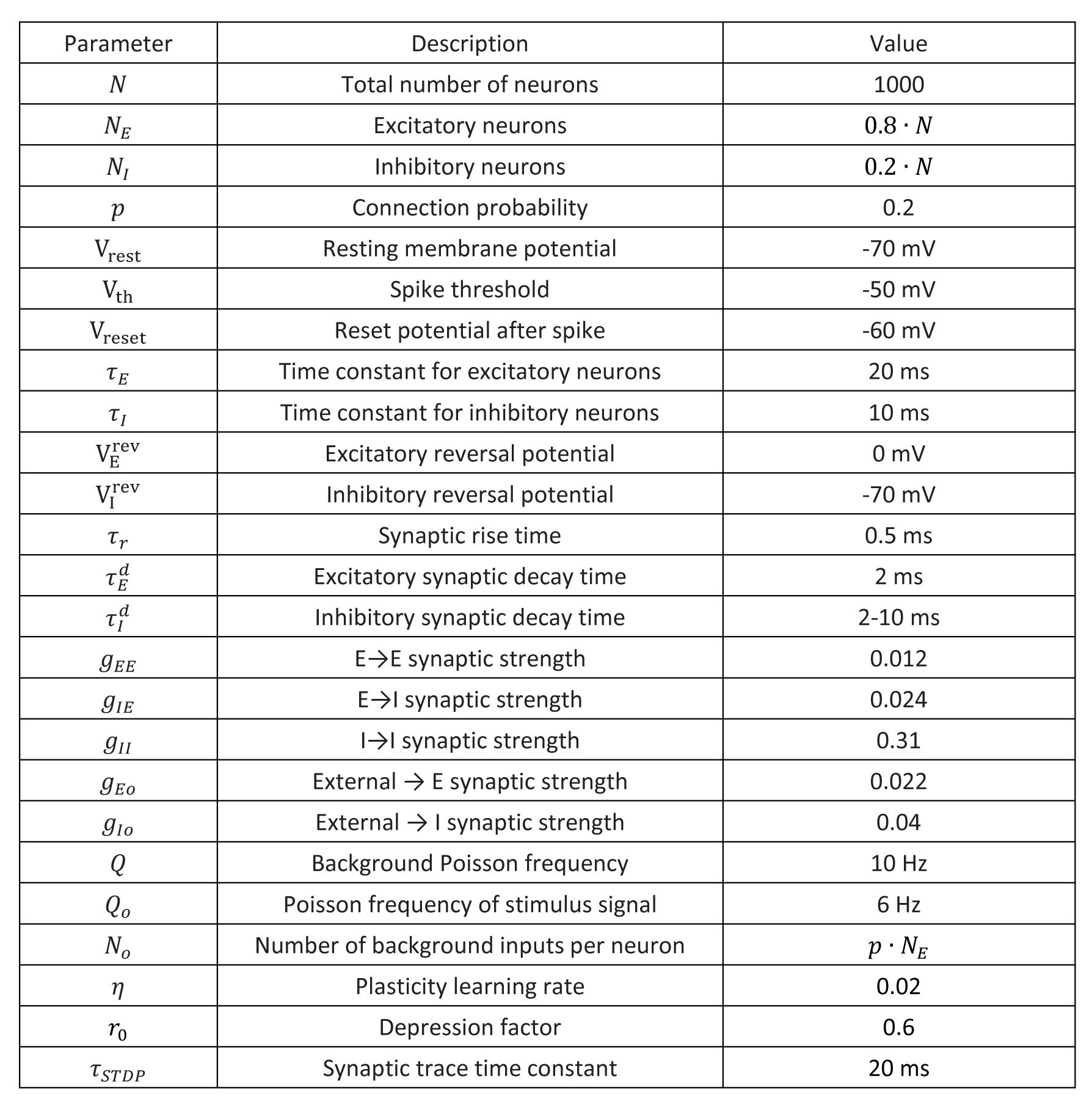

The parameters we used in the model simulation are listed in the following table. The relative strength of excitatory connection and inhibitory connection follows the previous studies [

38

,

51

]. The parameters about the plasticity is from previous study [

35

].

Measurement of avalanches and criticality

We evaluated neural avalanche properties in both experimental data and computational models. Since the experimental dataset consists of calcium signal time series rather than precise spike timing, we assessed avalanches from the mean time series

x(t)

following the methodology described in [

18

]. Specifically, we defined a threshold as

T

= 〈

x

〉 +

a

·

a

2

(

x

), where 〈

x

〉 represents the mean signal value,

σ

2

(x)

represents the variance of the signal, and

a

= 1 is a coefficient. We also scan the parameter

a

from

[0.5, 2]

, and find that our conclusions are robust. A neural avalanche was defined as a continuous period of activity exceeding this threshold. Avalanche duration

T

was measured as the length of this period, while avalanche size

S

was calculated as the summed above-threshold neural activity within this period. Using the Python package

powerlaw

[

52

], we estimated the optimal truncated power-law relationships:

P(T)~T

−α

and

P(S)~S

−τ

. According to crackling noise theory [

53

], the relationship between mean avalanche size 〈

S

〉 and for a given duration

T

follows 〈

S

〉(

T

) ~

T

^(1/

σvz

), where 1/σvz = (

τ

− 1)/(

ρ

− 1) for critical state. We numerically fitted the exponent 1/σvz from the avalanche data and then calculated the derivation coefficient from criticality [

23

] as DCC = |1/σvz − (

τ

− 1)/(

ρ

− 1)| . In agreement with previous study [

23

,

54

], we called a system is critical or near critical, if the scale-free avalanche, where the avalanches follow the three relations

P(T)

~

T

−α

,

P(S)~S

−τ

, 〈

S

〉(T) ~

T

^(1/

σvz

), and DCC~0 is found in this system.

In our numerical simulations of spiking networks, we evaluated spike-based neural avalanches by first aggregating spike trains from all excitatory neurons, then converting this combined record into a vector where each element represented the spike count within a time window. The window size was set to bin = 1/(ISI), where ISI denotes the average inter-spike interval of the aggregated spike trains of all the neurons, following previous studies [

16

,

38

]. Within this vector, a neural avalanche was defined as a sequence of consecutive non-zero bins. Avalanche duration was determined by the length of this sequence, while avalanche size was measured as the total spike count within sequence. The analysis of neural avalanches and criticality is the same as the above experimental data.

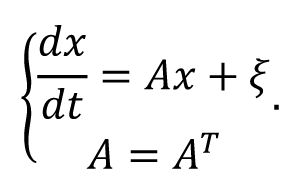

Estimate the effective connectivity from the neural activity

In generally, inferring the underlying the network structure/effective connectivity from the activity is quite challenge, and there are some different well-established methods [

55

]. We here adopt a simple solution by assuming the linearity of dynamics where neural activity

x

operates near the noise-driven steady state, and each node is driven by the independent Gaussian white noise with unit variance and the reciprocal structural interaction with other connected nodes. These assumptions give rise to the following equation of the dynamics:

The covariance matrix of the independent white noise driven activity

P

=

xx

T

follows the Lyapunov equation

AP

+

PA

T

=

I

[

56

]. Under the symmetry assumption of the structural/effective connectivity matrix A, we obtain

A

= P

−1

/2. For both experimental data and balanced model, we used the covariance matrix of neural activity to approximate

P

, and then solved the estimated structural/effective connectivity matrix

A

.

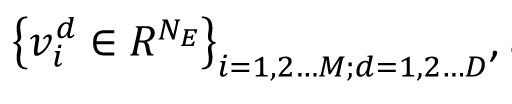

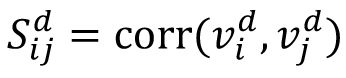

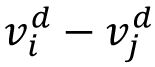

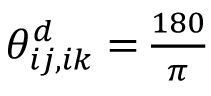

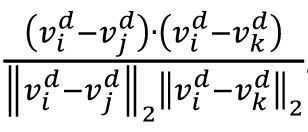

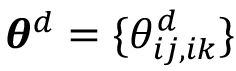

Measurement of representation similarity and representation geometry

Following the previous studies [

9

,

36

], we examined how the relationship between representations of multiple signals change with the time. We label the population response vector of each signal across different trial as

and

M

represents the number of signals and D’ represents the number of sessions. The representation similarity on each session is given by matrix

, and the correlation between the representation similarity matrices across

Δd

session in

Fig 3E

is given by the 〈corr (vec(

S

d

) vec(

S

d+Δd

))〉

d

.

In the representational space spanned by the population response vectors where each signal is represented by a node, the geometric relationship among the signals are represented by the edges between the nodes, namely, the difference between each population response vector pair of the signals,

(edge between the signal

i

and signal

j

in

Fig. 2F

) in a given session

d.

If the geometric relationship is stable across sessions, then the angle between any two edges will be preserved. The angle (with unit in degree) between two edges

ij

and

ik

(see illustration in

Fig. 2F

) is calculated as

arccos

. The overall representational geometry is denoted by the vector consisting of all angles as

, and the distance between the representational geometry across Ad sessions in

Fig 2G

is given by the 〈‖θ

d

— θ

d+Δd

‖

2

〉

d

.

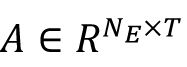

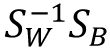

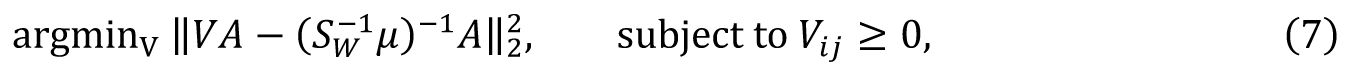

Linear discrimination analysis

To evaluate low dimension neural representation, we employed Linear Discriminant Analysis (LDA). In the model simulation, our mean population response matrix

was associated with signal labels

L ∈ Z

1×T

, where

L

i

∈ [1,M], and

M

represents the number of distinct stimuli or classes (

M

=10 in our study). Here

N

E

represent the number of excitatory neurons, and

T =T

0

*M

, where

T

0

represents the trial number of each stimulus (

T

0

=100 in our study). The fundamental idea of the LDA is to addresses the eigenvalue problem of

, where

S

W

and

represent the averaged within-class and between-class co-variance matrices, respectively. Here we utilized the coefficient matrix given by

, where

with

μ

m

represents the mean activity vector for class m. and

represent the mean activity vector of all labels. This coefficient matrix corresponds to the linear combination of eigenvectors of

and provides subspaces that effectively separate each class from others. For enhanced visualization (

Fig. 2C

,

Fig. 4C

, and

Fig. 6C

), we implemented t-SNE dimensionality reduction to obtain low-dimensional representations of the learned activity patterns

. We evaluated our LDA performance using 5-fold cross-validation to ensure robust assessment of its generalization capabilities.

Intuitively, we can regard the LDA as finding a learning subspace

to decode the mean population response matrix

A,

and the

can be understood as the synapse strength from the excitatory neurons to the downstream decoding neural population. Since excitatory neurons only project excitatory synapses with positive strength, we incorporated a non-negative constraint on the learned coefficients using non-negative linear regression. This involved solving:

which effectively replace the original coefficient matrix by a non-negative one. While this non-negative LDA performed worse than standard LDA on training data, it demonstrated significantly superior generalization on test data. Then, similar LDA method is also used to analyze the experimental data (

Fig. 6C

).

Supplementary figures

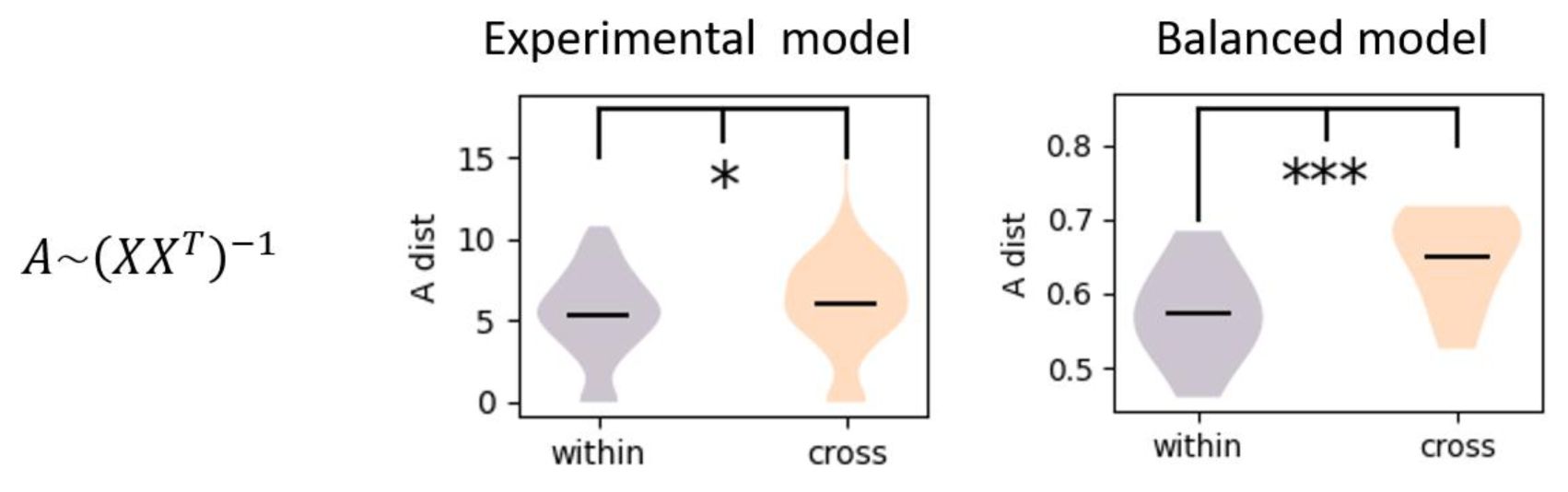

The estimated effective connectivity from neural activity using inverse of co-variance matrix.

Both experimental data (two sample t test, p ≈ 0.03 < 0.2) and balanced model (two sample t test, p ≈ 0) show larger change in effective connectivity for the cross-session than the within-session.

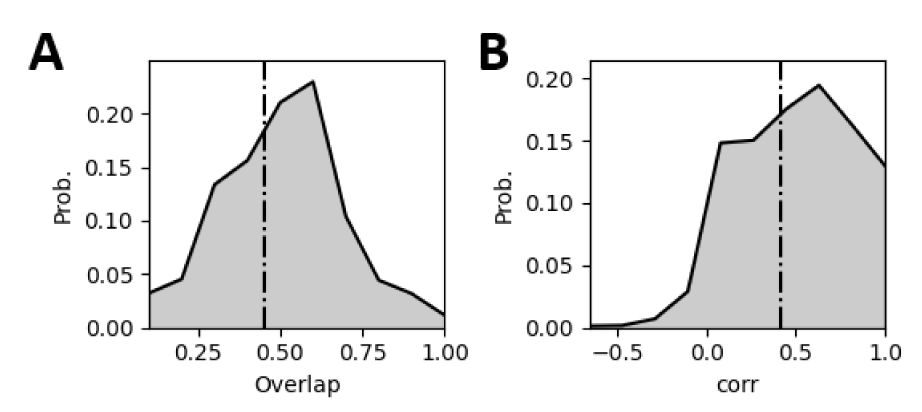

The statistics of representational drift of the experimental data.

The overlap refers to the overlap ratio of the active neurons under the same signal across different sessions, and the correlation refers to the correlation between the population response vectors under the same signal across different sessions. The statistics of response pattern is based on 78 mice and 30 signals.

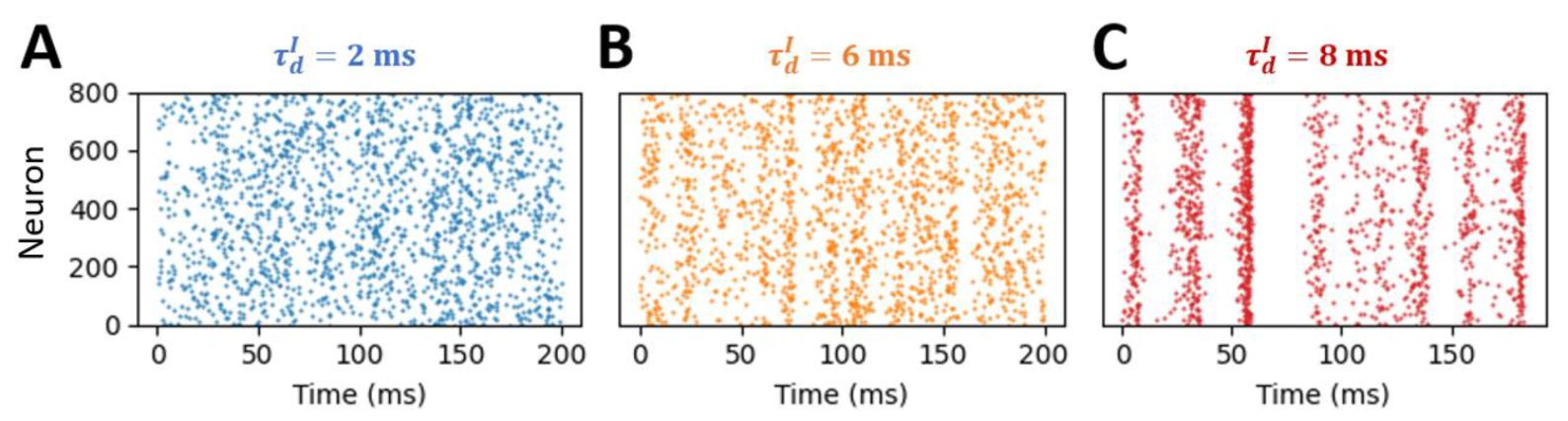

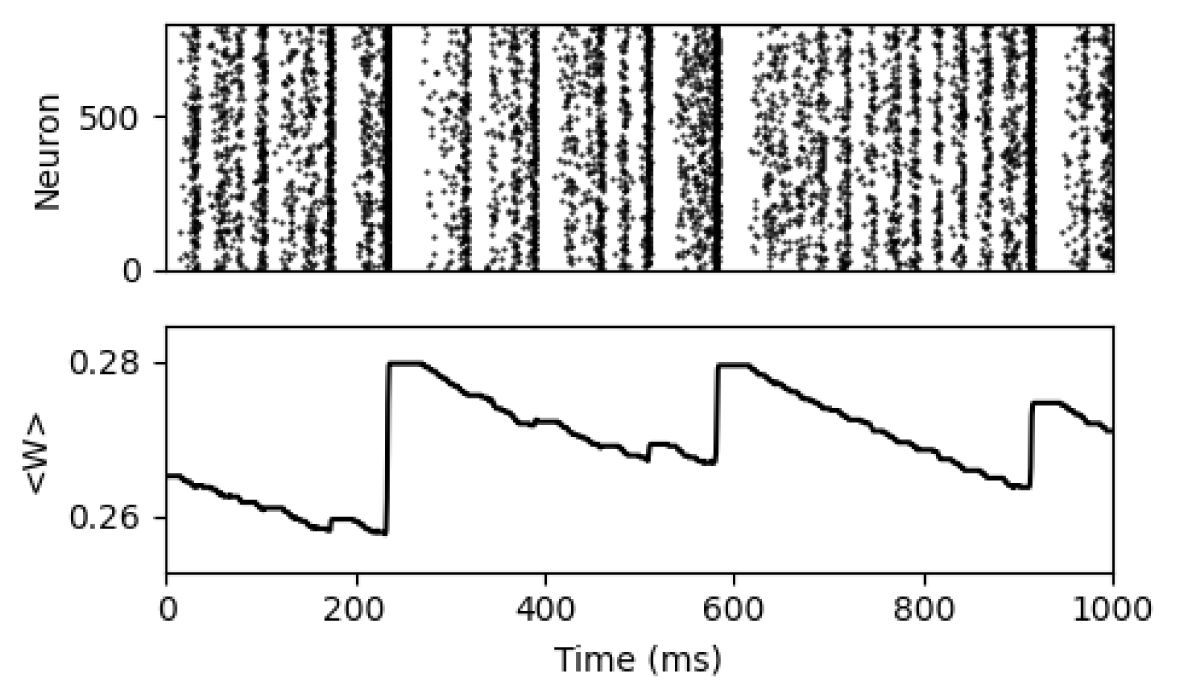

Raster plots of plasticity model in different state.

The blue, orange, and red raster plots correspond to spikes of the excitatory neurons in the subcritical (

), critical state (

), and supercritical state (

), respectively.

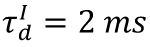

Raster plot of the plasticity model at the supercritical state with

and the corresponding dynamics of the average synapse strength.

Accuracy of different decoders with different input durations.

We use two decoders to evaluate the reliability of signal representation across sessions. For common decoder, we put the neural response from different sessions together, and used the LDA analysis to obtain the decoder from the training set, then evaluated the accuracy on the test set. For the transfer decoder, we used the neural response in one session to obtain the LDA-based decoder, and examined the decoding accuracy on the neural response in another session. The different color refers to different signal durations, and vertical black dash line indicates the critical state.

The synapse strength and indegree patterns of the shuffled plasticity model.

We use the same shuffled plasticity model as the

Fig. 5

. Since the

ΔW

in each step follows the random distribution, then the distance of synapse strength and indegree patterns naturally increase with the

ΔT

.

Data availability

Data used in

Figure 1

and

Figure 2

come from the

. Other results in the current manuscript comes from the numerical simulation, the code used for numerical simulation will be available in

.

Acknowledgements

We thank Prof. Daqing Guo and Prof. Pulin Gong for providing critical comments to our study.

Additional information

Funding

This work was supported in part by STI 884 2030-Major Projects (2022ZD0208500) and the Hong Kong Research Grant Council (CRF C4012-22G, GRF 12201421).

Author contributions

Conceptualization: Z. Yang and C. Zhou. Methodology: Z. Yang, J. Liang and C. Zhou. Investigation: Z. Yang and C. Zhou. Visualization: Z. Yang, J. Liang, W Yung and C. Zhou. Supervision: C. Zhou. Writing—original draft: Z. Yang. Writing—review and editing: Z. Yang, J. Liang, W Yung and C.

Zhou. Resources: C. Zhou; Data curation: Z. Yang. Validation: Z. Yang. Formal analysis: Z. Yang. Software: Z. Yang. Project administration: C. Zhou. Funding acquisition: W Yung and C. Zhou.

Funding

Hong Kong Government (HK) (STI 884 2030-M)

Changsong Zhou

Hong Kong Government (HK) (2022ZD0208500)

Changsong Zhou

Hong Kong Government (HK) (GRF 12201421)

Changsong Zhou

Hong Kong Government (HK) (CRF C4012-22G)

Wing-Ho Yung

References

[1]

Spontaneous fluctuations in brain activity observed with functional magnetic resonance imaging

Nat. Rev. Neurosci.

8

:700

Google Scholar

[2]

Spontaneous cortical activity is transiently poised close to criticality

PLOS Comput. Biol

13

:e1005543

Google Scholar

[3]

The secret life of predictive brains: what’s spontaneous activity for?

Trends Cogn. Sci.

25

:730

Google Scholar

[4]

The predictive nature of spontaneous brain activity across scales and species

Neuron

S0896627325001278

Google Scholar

[5]

Dynamic Reorganization of Neuronal Activity Patterns in Parietal Cortex

Cell

170

:986

Google Scholar

[6]

Causes and consequences of representational drift

Curr. Opin. Neurobiol.

58

:141

Google Scholar

[7]

The brain in motion: How ensemble fluidity drives memory-updating and flexibility

eLife

9

:e63550

Google Scholar

[8]

Representational drift in the mouse visual cortex

Curr. Biol.

31

:4327

Google Scholar

[9]

Representational drift in primary olfactory cortex

Nature

594

:541

Google Scholar

[10]

Emergent reliability in sensory cortical coding and inter-area communication

Nature

605

:713

Google Scholar

[11]

Representations in human primary visual cortex drift over time

Nat. Commun.

14

:4422

Google Scholar

[12]

Coordinated drift of receptive fields in Hebbian/anti-Hebbian network models during noisy representation learning

Nat. Neurosci

:1

Google Scholar

[13]

Stochastic Gradient Descent-Induced Drift of Representation in a Two-Layer Neural Network

arXiv

Google Scholar

[14]

Synaptic Plasticity: Multiple Forms, Functions, and Mechanisms

Neuropsychopharmacology

33

:18

Google Scholar

[15]

Homeostatic plasticity in the developing nervous system

Nat. Rev. Neurosci.

5

:97

Google Scholar

[16]

Neuronal Avalanches in Neocortical Circuits

J. Neurosci.

23

:11167

Google Scholar

[17]

Criticality between Cortical States

Phys. Rev. Lett.

122

:208101

Google Scholar

[18]

Lowdimensional criticality embedded in high-dimensional awake brain dynamics

C E N C E V N C E S

[19]

Criticality in the brain: A synthesis of neurobiology, models and cognition

Prog. Neurobiol.

158

:132

Google Scholar

[20]

25 years of criticality in neuroscience — established results, open controversies, novel concepts

Curr. Opin. Neurobiol.

58

:105

Google Scholar

[21]

Self-organized criticality

Phys. Rev. A

38

:364

Google Scholar

[22]

Self-Organization Toward Criticality by Synaptic Plasticity

Front. Phys.

9

:619661

Google Scholar

[23]

Cortical Circuit Dynamics Are Homeostatically Tuned to Criticality In Vivo

Neuron

104

:655

Google Scholar

[24]

Homeostatic Plasticity and External Input Shape Neural Network Dynamics

Phys. Rev. X

8

:031018

Google Scholar

[25]

Homeostatic plasticity and emergence of functional networks in a whole-brain model at criticality

Sci. Rep.

8

:15682

Google Scholar

[26]

Optimal dynamical range of excitable networks at criticality

Nat. Phys.

2

:348

Google Scholar

[27]

Griffiths phases and the stretching of criticality in brain networks

Nat. Commun.

4

:2521

Google Scholar

[28]

Maximal Variability of Phase Synchrony in Cortical Networks with Neuronal Avalanches

J. Neurosci.

32

:1061

Google Scholar

[29]

Adaptation towards scale-free dynamics improves cortical stimulus discrimination at the cost of reduced detection

PLOS Comput. Biol

13

:e1005574

Google Scholar

[30]

Maximizing Sensory Dynamic Range by Tuning the Cortical State to Criticality

PLOS Comput. Biol

11

:e1004576

Google Scholar

[31]

How critical is brain criticality?

Trends Neurosci.

45

:820

Google Scholar

[32]

Excitation-Inhibition Balance, Neural Criticality, and Activities in Neuronal Circuits

The Neuroscientist

31

:31

Google Scholar

[33]

Critical Avalanches in Excitation-Inhibition Balanced Networks Reconcile Response Reliability with Sensitivity for Optimal Neural Representation

Phys. Rev. Lett.

134

:028401

Google Scholar

[34]

Criticality enhances the multilevel reliability of stimulus responses in cortical neural networks

PLOS Comput. Biol

18

:e1009848

Google Scholar

[35]

Inhibitory Plasticity Balances Excitation and Inhibition in Sensory Pathways and Memory Networks

Science

334

:1569

Google Scholar

[36]

The geometry of representational drift in natural and artificial neural networks

PLOS Comput. Biol

18

:e1010716

Google Scholar

[37]

Network-centered homeostasis through inhibition maintains hippocampal spatial map and cortical circuit function

Cell Rep.

36

:109577

Google Scholar

[38]

Hopf Bifurcation in Mean Field Explains Critical Avalanches in Excitation-Inhibition Balanced Neuronal Networks: A Mechanism for Multiscale Variability

Front. Syst. Neurosci.

14

:580011

Google Scholar

[39]

Cortical Entropy, Mutual Information and Scale-Free Dynamics in Waking Mice

Cereb. Cortex

26

:3945

Google Scholar

[40]

Time and experience differentially affect distinct aspects of hippocampal representational drift

Neuron

111

:2357

Google Scholar

[41]

Stimulus-dependent representational drift in primary visual cortex

Nat. Commun.

12

:5169

Google Scholar

[42]

A Large-Scale Circuit Mechanism for Hierarchical Dynamical Processing in the Primate Cortex

Neuron

88

:419

Google Scholar

[43]

Hierarchical Heterogeneity across Human Cortex Shapes Large-Scale Neural Dynamics

Neuron

101

:1181

Google Scholar

[44]

The dynamics of resting fluctuations in the brain: metastability and its dynamical cortical core

Sci. Rep.

7

:3095

Google Scholar

[45]

Quasiuniversal scaling in mouse-brain neuronal activity stems from edge-of-instability critical dynamics

Proc. Natl. Acad. Sci

120

:e2208998120

Google Scholar

[46]

Stable task information from an unstable neural population

eLife

9

:e51121

Google Scholar

[47]

Representational drift and learning-induced stabilization in the piriform cortex

Proc. Natl. Acad. Sci

122

:e2501811122

Google Scholar

[48]

Drifting assemblies for persistent memory: Neuron transitions and unsupervised compensation

Proc. Natl. Acad. Sci

118

:e2023832118

Google Scholar

[49]

Stimulus onset quenches neural variability: a widespread cortical phenomenon

Nat. Neurosci.

13

:369

Google Scholar

[50]

Long-Range Amplitude Coupling Is Optimized for Brain Networks That Function at Criticality

J. Neurosci.

42

:2221

Google Scholar

[51]

What determines the frequency of fast network oscillations with irregular neural discharges? I. Synaptic dynamics and excitation-inhibition balance

J. Neurophysiol.

90

:415

Google Scholar

[52]

powerlaw: A Python Package for Analysis of HeavyTailed Distributions

PLoS ONE

9

:e85777

Google Scholar

[53]

Crackling noise

Nature

410

:6825

Google Scholar

[54]

Sleep restores an optimal computational regime in cortical networks

Nat. Neurosci.

27

:328

Google Scholar

[55]

Functional and Effective Connectivity: A Review

Brain Connect.

1

:13

Google Scholar

[56]

Stochastic Processes in Physics, Chemistry, and Biology

Berlin, Heidelberg

:

Springer

Google Scholar

Allen Brain Observatory two-photon Ca2+ imaging and Neuropixels datasets

Allen Brain Institute

Article and author information

Author information

Cite all versions

You can cite all versions using the DOI

10.7554/eLife.109546

. This DOI represents all versions, and will always resolve to the latest one.

Copyright

© 2026,

Yang et al.

This article is distributed under the terms of the

Creative Commons Attribution License

, which permits unrestricted use and redistribution provided that the original author and source are credited.

Metrics

views

0

downloads

0

citations

0

Views, downloads and citations are aggregated across all versions of this paper published by eLife.